IN THIS ISSUE

AI as a delegated decision system — and why authority must be engineered

The hidden coordination cost of scaling cross-functional AI

Sponsor drift, metric fragmentation, and informal override culture

Decision topology diagrams: from hierarchy to distributed control surfaces

Regulatory and socio-technical signals reshaping accountability

How to assess whether your AI system is structurally stable

Abstract

Artificial intelligence systems do not fail only because of poor models, bad data, or unstable infrastructure.

They also fail because no one knows who is allowed to decide.

In production environments, AI systems alter how decisions are made, who makes them, and on what authority. They introduce new inference paths, new forms of delegation, and new feedback loops. Yet in many organizations, decision rights remain implicitly inherited from pre-AI structures.

The result is predictable:

Models are deployed without clear ownership.

Outputs are used without defined accountability.

Failures propagate without a designated authority to intervene.

This is not a governance failure in the narrow sense. It is a coordination failure rooted in undefined decision rights.

AI is not just a computational layer. It is a decision-amplification system embedded inside organizational authority structures. When those structures are ambiguous, the system becomes unstable.

AI Systems Reallocate Authority

In traditional systems, authority is relatively legible.

A report is generated.

A manager reviews it.

A decision is made.

Responsibility is traceable.

AI systems compress and obscure this chain.

A model produces a recommendation.

The recommendation is embedded into a workflow.

A human approves it, or fails to override it.

The system executes at scale.

Authority becomes distributed across:

Data owners

Model developers

Infrastructure teams

Product managers

Compliance

Operational users

But the right to decide, to override, to halt, or to redefine is rarely codified.

Figure: Decision flow in a traditional system.

Decision authority is concentrated at Manager Review.

Now contrast that with an AI-mediated system.

Figure: AI-mediated decision flow (undefined authority).

Authority is now implicit and distributed.

No single node clearly owns the final decision.

This ambiguity is tolerable during pilots.

It becomes destabilizing in production.

The Three Decision Rights That Must Be Defined

In practice, AI systems require explicit ownership of three distinct decision rights:

Decision to Deploy: Who authorizes the system to influence outcomes?

Decision to Override: Who has authority to interrupt or bypass model outputs?

Decision to Redefine: Who can change the objective, retrain the model, or alter its evaluation criteria?

Most organizations define the first.

Few define the second.

Almost none clearly define the third.

The absence of override and redefine rights creates two common pathologies:

Model drift without intervention

Escalation paralysis during failure

When no one clearly owns the authority to halt or redefine a system, teams default to inaction.
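
As a minimal sketch of what "defined" could mean in practice, the three rights can be recorded per system and checked for gaps. The class names, the system name, and the owner title below are hypothetical illustrations, not part of any existing tool or of the practitioner edition's matrix.

```python
from dataclasses import dataclass, field
from enum import Enum


class DecisionRight(Enum):
    DEPLOY = "deploy"
    OVERRIDE = "override"
    REDEFINE = "redefine"


@dataclass
class DecisionRightsMap:
    """Maps each decision right for one AI system to a named owner."""
    system: str
    owners: dict = field(default_factory=dict)

    def assign(self, right: DecisionRight, owner: str) -> None:
        # An empty owner would recreate the ambiguity this map is meant to remove.
        if not owner.strip():
            raise ValueError("owner must be a named person or role")
        self.owners[right] = owner

    def unowned(self) -> list:
        """Return the rights that nobody currently owns, in definition order."""
        return [r for r in DecisionRight if r not in self.owners]


# Hypothetical example: only the deploy right has been assigned.
rights = DecisionRightsMap(system="pricing-optimizer")
rights.assign(DecisionRight.DEPLOY, "VP Product")
print([r.value for r in rights.unowned()])  # ['override', 'redefine']
```

A check like this could run as part of a release review, so that a system with unowned override or redefine rights is flagged before it reaches production.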

The Coordination Tax of Cross-Functional AI

AI systems are inherently cross-functional:

Data originates in one domain

Models are developed in another

Products embed outputs elsewhere

Compliance oversees constraints

Operations absorb consequences

Each boundary introduces a coordination tax.

If decision rights are not explicitly assigned at each boundary, responsibility diffuses.

Diffused responsibility produces:

Slow incident response

Quiet degradation of model performance

Political conflict after visible failure

Informal override behaviors (shadow controls)

This tax increases with scale.

Small systems survive on goodwill and informal trust.

Scaled systems require formal authority maps.

Governance Is Not the Same as Authority

Many organizations assume governance committees solve this problem.

They do not.

Governance bodies often:

Review policies

Approve documentation

Evaluate compliance

But they rarely own operational override authority.

Operational authority must live inside the execution path.

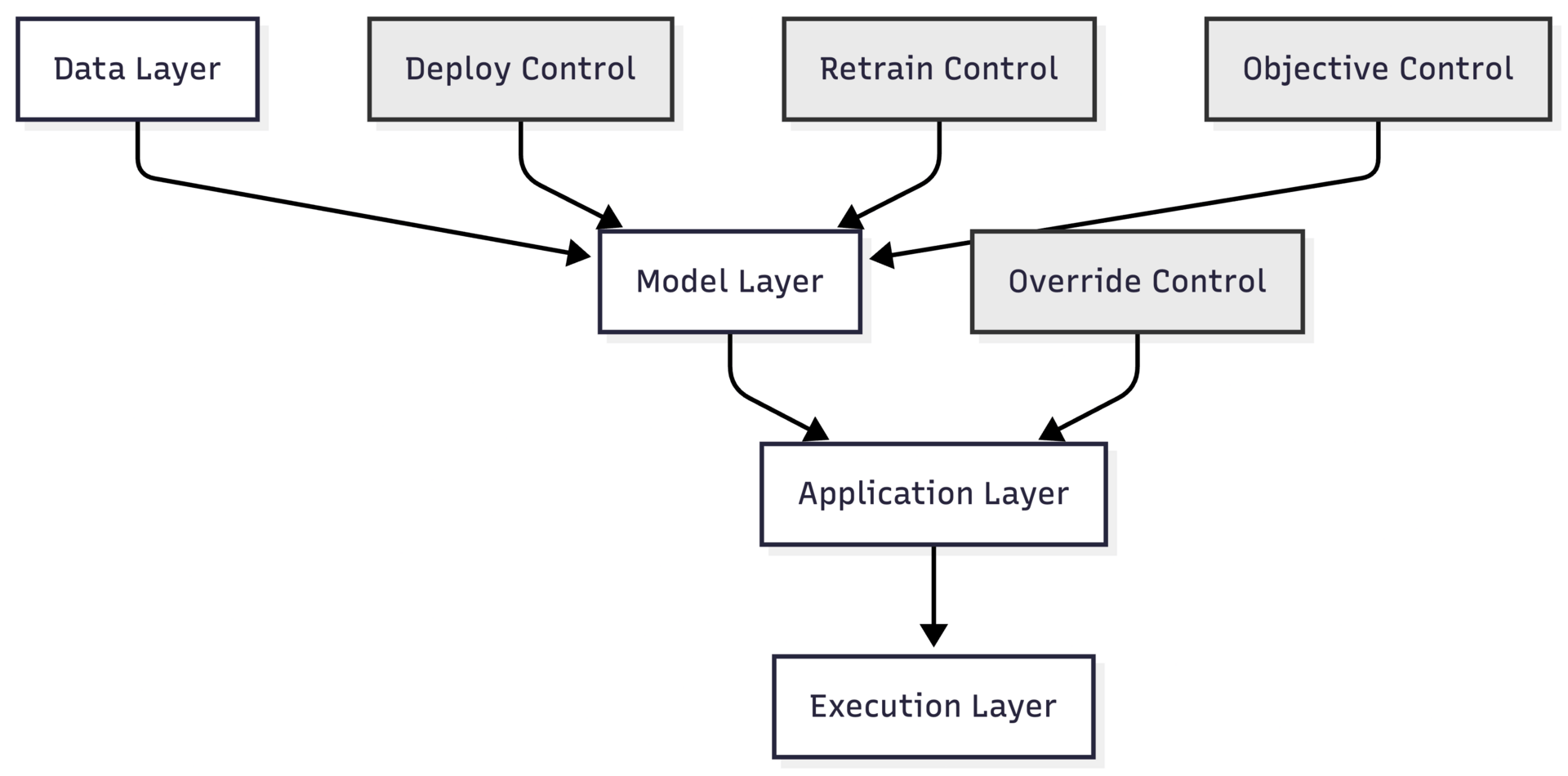

Figure: Decision rights as control surfaces.

Control surfaces must be:

Named

Owned

Testable

Without these properties, AI systems operate in a governance vacuum.

Failure Case: Undefined Override Authority

Consider a pricing optimization model.

The data team owns training data.

The ML team owns model updates.

The product team owns integration.

Sales teams consume outputs.

Finance monitors revenue variance.

The model begins drifting due to subtle market changes.

Sales notices anomalies.

Finance sees volatility.

The ML team waits for formal retraining cycles.

Product assumes performance metrics are within tolerance.

No one has explicit authority to suspend inference.

Revenue impact accumulates quietly.

By the time intervention occurs, the system has eroded trust across departments.

The technical problem was manageable.

The coordination problem was not.
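
One way to picture explicit suspend authority inside the execution path is a small gate in front of the pricing model. This is a sketch under stated assumptions: `InferenceGate`, the role name `pricing-duty-manager`, and the fallback price are all hypothetical, and a real system would need persistence and notification that are omitted here.

```python
from dataclasses import dataclass, field


@dataclass
class InferenceGate:
    """Wraps model output so a named override owner can suspend it."""
    override_owners: frozenset
    fallback_price: float
    suspended: bool = False
    audit_log: list = field(default_factory=list)

    def suspend(self, actor: str, reason: str) -> None:
        # Only roles that explicitly hold the override right may suspend.
        if actor not in self.override_owners:
            raise PermissionError(f"{actor} holds no override right")
        self.suspended = True
        self.audit_log.append(f"suspended by {actor}: {reason}")

    def price(self, model_price: float) -> float:
        """Serve the model output unless inference is suspended."""
        return self.fallback_price if self.suspended else model_price


# Hypothetical usage: the duty manager suspends inference after drift is noticed.
gate = InferenceGate(
    override_owners=frozenset({"pricing-duty-manager"}),
    fallback_price=100.0,
)
before = gate.price(87.5)
gate.suspend("pricing-duty-manager", "revenue variance outside tolerance")
after = gate.price(87.5)
print(before, after)  # 87.5 100.0
```

The design choice worth noting is that the right to suspend is checked in code rather than negotiated during the incident, which is the gap the failure case describes.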

In the practitioner edition, this editorial includes:

A formal decision-rights matrix for AI systems in production

A reusable RACI variant tailored for model deployment and oversight

Failure-mode mapping for undefined override authority

A step-by-step method to audit decision surfaces in existing systems

A higher-resolution governance control diagram with escalation paths

These extensions do not change the argument. They make its consequences explicit.

IMPLEMENTATION BRIEF

Patterns & Failures

The Coordination Tax of Cross-Functional AI

AI systems rarely live inside a single function.

Data originates in one domain.

Models are developed in another.

Products embed outputs elsewhere.

Operations absorb consequences.

Compliance monitors exposure.

Each boundary introduces coordination overhead.

This overhead is not accidental. It is structural.

Why AI Is Inherently Cross-Functional

Most traditional software systems align to a product boundary. Ownership is relatively clean:

Product defines requirements.

Engineering builds.

Operations runs.

Finance evaluates outcomes.

AI systems fracture this alignment.

They require:

Domain data expertise

Statistical modeling expertise

Infrastructure support

Product integration

Business judgment

Regulatory oversight

No single function owns all of these competencies.

As a result, AI systems are distributed across authority domains by default.

The Coordination Tax Defined

The coordination tax is the accumulated friction introduced when multiple authority domains must align before an AI system can:

Deploy

Update

Override

Redefine

This tax appears in four places:

Specification Misalignment: Product goals differ from model optimization targets.

Update Latency: Retraining cycles depend on cross-team scheduling.

Incident Ambiguity: No clear authority during performance degradation.

Economic Diffusion: Costs sit in one budget; benefits in another.

Individually, these frictions seem manageable.

Collectively, they slow iteration and obscure accountability.
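
To make the friction visible, one could tabulate how many functions must agree before each action and whether anyone is named to decide. The sketch below is illustrative only: the sign-off sets and deciders are invented example data, and the report is not the scoring model referenced in the practitioner edition.

```python
# Hypothetical sign-off requirements: which functions must agree before each action.
SIGN_OFFS = {
    "deploy": {"product", "ml", "compliance"},
    "update": {"ml", "data", "product"},
    "override": {"operations", "product", "ml", "finance"},
    "redefine": {"product", "ml", "finance", "compliance"},
}

# Hypothetical named deciders: who holds the final call for an action.
DECIDERS = {"deploy": "product", "update": "ml"}


def coordination_report(sign_offs: dict, deciders: dict) -> dict:
    """Summarize, per action, how many sign-offs are needed and who decides."""
    return {
        action: {"sign_offs": len(functions), "decider": deciders.get(action)}
        for action, functions in sign_offs.items()
    }


def undecided(report: dict) -> list:
    """Actions that need agreement from several functions but have no named decider."""
    return sorted(a for a, row in report.items() if row["decider"] is None)


report = coordination_report(SIGN_OFFS, DECIDERS)
print(undecided(report))  # ['override', 'redefine']
```

In this example data, the two actions with the most required sign-offs are also the ones with no named decider, which is where incident ambiguity would be expected to surface.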

Coordination Tax Across the Lifecycle

Figure: Cross-functional coordination points.

Every dashed edge represents an alignment requirement.

In small pilots, these edges are informal.

In production, each edge becomes a dependency queue.

The coordination tax grows nonlinearly with:

System scale

Regulatory exposure

Economic impact

Hidden Symptoms

Organizations often misdiagnose coordination tax as:

“Model performance issues”

“Data quality problems”

“Slow MLOps”

“Cultural resistance”

But the underlying issue is structural:

Too many stakeholders must agree before action is taken.

When coordination cost exceeds perceived benefit, AI systems stall.

This explains why:

Pilots succeed but never scale.

Systems degrade quietly without correction.

Executive sponsors lose confidence despite technical viability.

In the practitioner edition, this brief includes:

A coordination-load scoring model for AI systems

A lifecycle map showing where coordination cost compounds

A structural test to detect coordination bottlenecks before deployment

A redesign pattern that reduces cross-functional friction without centralizing control

These extensions do not change the argument. They make its consequences measurable.

FIELD NOTES

From Practice

Patterns of Organizational Breakdown

Over multiple AI implementations, the technical architecture rarely collapses first.

The organizational architecture does.

Across industries and maturity levels, breakdowns cluster around recurring structural patterns. These failures are not dramatic. They are gradual misalignments between authority, incentives, and operational responsibility.

Below are three recurring patterns observed in production AI systems.

Pattern 1 — The Sponsor Vacuum

Symptom:

An executive sponsor approves the pilot. The system reaches production. Ongoing ownership becomes ambiguous.

During exploration, sponsorship is visible and enthusiastic. Once the system stabilizes, the sponsor disengages. Responsibility drifts downward into operational teams without corresponding authority.

When the system encounters its first material failure:

Operations cannot redefine objectives.

Product cannot change economic targets.

ML cannot alter evaluation criteria without approval.

The original sponsor is no longer structurally engaged.

Figure: Sponsor drift after pilot.

Authority fades as operational exposure increases.

This creates a vacuum precisely when accountability must become sharper.

Pattern 2 — Metric Fragmentation

Symptom:

Each function optimizes a different metric.

ML optimizes model accuracy.

Product optimizes engagement or conversion.

Finance monitors cost variance.

Compliance monitors exposure.

Operations monitors stability.

Individually, each metric is rational. Collectively, they create drift.

No single authority owns the integrated outcome.

When performance degrades, teams defend their local metrics rather than examine systemic misalignment.

This produces silent failure.

The system technically “works,” but organizational trust erodes.

Pattern 3 — Informal Override Culture

Symptom:

Operational teams override model outputs informally.

Sales ignores pricing suggestions.

Support agents bypass routing recommendations.

Managers manually adjust forecasts.

Overrides are not logged.

No formal feedback loop exists.

Model retraining does not reflect operational reality.

The system becomes performative.

Leadership believes AI is influencing decisions.

In practice, humans quietly reassert control.

Figure: Shadow override loop.

The absence of visible override authority produces invisible override behavior.

This is not resistance. It is adaptation to ambiguity.
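
Making override behavior visible can start with a simple log that records every decision and keeps the overrides with a stated reason. The following is a minimal sketch, assuming numeric model outputs and free-text reasons; `OverrideLog` and its fields are hypothetical names, and the link into retraining is left out.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class OverrideEvent:
    actor: str
    model_output: float
    human_decision: float
    reason: str
    at: datetime


class OverrideLog:
    """Records decisions and keeps the ones where a human departed from the model."""

    def __init__(self) -> None:
        self.events = []
        self.decisions_seen = 0

    def record_decision(self, actor: str, model_output: float,
                        human_decision: float, reason: str = "") -> None:
        self.decisions_seen += 1
        if human_decision != model_output:
            self.events.append(OverrideEvent(
                actor, model_output, human_decision, reason,
                datetime.now(timezone.utc),
            ))

    def override_rate(self) -> float:
        """Share of decisions in which the model output was overridden."""
        if self.decisions_seen == 0:
            return 0.0
        return len(self.events) / self.decisions_seen

    def top_reasons(self, n: int = 3) -> list:
        """Most frequent override reasons, a candidate input to retraining review."""
        return Counter(e.reason for e in self.events).most_common(n)


# Hypothetical usage with four pricing decisions, two of them overridden.
log = OverrideLog()
log.record_decision("rep-1", 120.0, 120.0)
log.record_decision("rep-2", 120.0, 99.0, "competitor undercut")
log.record_decision("rep-1", 80.0, 80.0)
log.record_decision("rep-3", 95.0, 90.0, "competitor undercut")
print(log.override_rate())  # 0.5
print(log.top_reasons(1))   # [('competitor undercut', 2)]
```

An override rate that leadership can see turns the shadow loop into a signal: a high rate with a recurring reason suggests the model, not the operators, is out of step with operational reality.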

What These Patterns Share

Across all three breakdowns:

Authority is ambiguous.

Escalation paths are unclear.

Incentives are misaligned.

Feedback loops are incomplete.

The failures are organizational before they are technical.

Production AI systems expose weak coordination structures. They do not create them. They amplify them.

In the practitioner edition, these field notes include:

A structural diagnostic template to detect sponsor vacuum risk

A unified outcome-metric framework to reduce metric fragmentation

A formal override logging model that integrates with retraining cycles

An escalation mapping exercise for AI systems in production

These extensions do not change the observations. They make intervention possible.

VISUALIZATION

Applied Systems

Decision Flows and Accountability Structures

AI systems alter decision topology.

They compress latency between signal and action.

They redistribute evaluation across functions.

They embed probabilistic outputs into deterministic workflows.

When decision rights are undefined, the system’s topology becomes unstable.

This visual essay makes that topology explicit.

1. The Assumed Decision Structure

Most organizations assume AI systems fit inside an existing reporting hierarchy.

Figure: Assumed hierarchical control.

In this mental model:

AI is a tool.

Authority flows top-down.

Override is managerial.

This structure rarely matches production reality.

2. The Actual Decision Structure in AI Systems

AI systems introduce lateral authority dependencies.

Figure: Actual cross-domain decision topology.

Authority is no longer vertical.

It is distributed and interdependent.

If no explicit control surfaces are defined, escalation becomes political rather than structural.

3. Where Accountability Breaks

Breakdown occurs at three structural fault lines:

Objective Misalignment — Product defines outcome; ML optimizes proxy.

Economic Misalignment — Cost borne in one domain; benefit in another.

Operational Exposure — Operations absorbs risk without redefine authority.

Figure: Accountability fracture points.

When feedback loops are slow or indirect, misalignment compounds.

4. Control Surfaces as Structural Stabilizers

Stable AI systems define control surfaces at:

Deployment

Override

Retraining

Objective Redefinition

Figure: Explicit control surface model.

Control surfaces:

Must have named owners.

Must be reachable under time constraint.

Must be exercised periodically.

If control is theoretical rather than operational, coordination degrades under stress.
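
The three requirements above lend themselves to a periodic check. The sketch below assumes each control surface records its owner, a target response time, and the date of its last drill; the 90-day threshold, the owner titles, and the dates are hypothetical example values.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass(frozen=True)
class ControlSurface:
    name: str
    owner: str                   # named owner
    max_response_minutes: int    # target for reachability under time constraint
    last_drill: Optional[date]   # None means the surface was never exercised


def stale_surfaces(surfaces: list, today: date,
                   max_age: timedelta = timedelta(days=90)) -> list:
    """Return names of surfaces never drilled or drilled longer ago than max_age."""
    return [
        s.name for s in surfaces
        if s.last_drill is None or today - s.last_drill > max_age
    ]


# Hypothetical inventory for one production system.
surfaces = [
    ControlSurface("deployment", "VP Product", 240, date(2025, 5, 1)),
    ControlSurface("override", "Duty Manager", 15, None),
    ControlSurface("retraining", "ML Lead", 1440, date(2025, 1, 15)),
    ControlSurface("objective redefinition", "System Owner", 2880, date(2025, 3, 10)),
]
print(stale_surfaces(surfaces, today=date(2025, 6, 1)))  # ['override', 'retraining']
```

A surface that appears in this list has an owner on paper but has not been exercised recently, which is the theoretical-rather-than-operational condition the section warns about.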

5. From Informal Alignment to Engineered Coordination

AI systems cannot rely on cultural alignment alone.

They require:

Diagrammed authority

Explicit escalation paths

Logged override behavior

Metric traceability

Without these, the system appears coherent until the first non-trivial failure.

Visualizing decision topology is not decorative.

It is a diagnostic tool.

In the practitioner edition, this visualization includes:

A higher-resolution governance control topology with failure propagation paths

A side-by-side diagram: pilot authority structure vs production authority structure

A reusable Mermaid template for decision-surface mapping

A structural stress-test model to simulate authority breakdown

These extensions do not change the diagrams. They make them operational.

RESEARCH & SIGNALS

Organizational Design and Socio-Technical Constraints

AI system failures are frequently framed as technical defects.

Recent research and regulatory signals suggest otherwise.

The more mature the deployment environment, the clearer a pattern becomes:

AI reliability correlates more strongly with organizational structure than with model capability.

Below are five signals relevant to implementers.

Signal 1 — Human-in-the-Loop Does Not Guarantee Accountability

A growing body of post-incident analysis shows that inserting a human approval step does not automatically clarify responsibility.

In many deployments:

Humans approve model outputs without authority to redefine objectives.

Approval becomes procedural rather than substantive.

Overrides are discouraged implicitly through performance pressure.

The structural issue:

Human review without decision rights is compliance theater.

Implication for implementers:

If a human is “in the loop,” they must also have:

Override authority

Escalation path

Protection from economic penalty for override

Otherwise, the loop is decorative.

Signal 2 — Socio-Technical Research on Distributed Responsibility

Organizational research consistently demonstrates:

When responsibility is distributed across domains without clear authority mapping, response latency increases nonlinearly under stress.

This is observable in:

Incident response systems

Aviation safety

Financial risk governance

AI systems exhibit the same property.

Figure: Responsibility diffusion under stress.

Latency is not technical.

It is structural.

Signal 3 — Regulatory Emphasis on Accountability Traceability

Emerging regulatory frameworks increasingly emphasize:

Named accountability

Auditability of decision pathways

Documentation of override logic

The emphasis is shifting from “model fairness” to “organizational accountability.”

For implementers, this means:

Documentation must describe not only model behavior, but also:

Who is authorized to change it

Who absorbs economic impact

Who communicates with regulators

Regulators are implicitly testing decision-right clarity.

Signal 4 — Scaling Failures in Mature AI Organizations

Public case studies of AI rollbacks in large enterprises frequently cite:

Cross-functional misalignment

Undefined business ownership

Slow incident escalation

Not model inadequacy.

As AI systems become economically material, coordination cost becomes visible.

This reinforces the thesis of this issue:

Technical sophistication does not substitute for authority clarity.

Signal 5 — The Shift Toward AI System Ownership Roles

A notable structural trend is the emergence of roles such as:

AI System Owner

Responsible AI Lead

Model Risk Officer

These roles signal recognition that AI systems require durable authority nodes.

However, without integration into operational workflows, such roles risk becoming advisory rather than decisive.

The existence of a title does not guarantee authority.

In the practitioner edition, these research notes include:

A regulatory-aligned accountability mapping template

A comparative matrix of advisory vs operational AI oversight roles

A diagram showing traceability from model output to executive accountability

A cross-signal synthesis translating research into system design implications

These extensions do not change the signals. They convert them into design requirements.

SYNTHESIS

Coordination as the Hidden Constraint

Across this issue, we have examined decision rights, coordination tax, organizational breakdown patterns, and accountability topology.

The common thread is structural:

AI systems fail less often because of incorrect predictions and more often because of undefined authority.

When AI is introduced into an organization, it does three things simultaneously:

Compresses decision latency

Redistributes evaluation across functions

Increases economic exposure of automated judgment

If decision rights are not redesigned to match these shifts, misalignment accumulates.

This is not a cultural problem.

It is an architectural one.

The Structural Arc of Volume 1

Issue 4 reframed governance as architecture.

Issue 5 exposed scaling and reliability constraints.

Issue 6 surfaces the coordination layer beneath both.

Governance fails when authority is unclear.

Scaling fails when coordination cost compounds.

Reliability degrades when override rights are ambiguous.

These are not separate problems.

They are manifestations of the same structural omission:

Decision rights were never engineered.

The Coordination Equation

Production AI viability depends on three variables:

Technical Validity (Does the system work?)

Economic Coherence (Does value exceed cost?)

Authority Clarity (Can the system be controlled under stress?)

Most organizations optimize the first two.

The third determines survivability.

Figure: AI system viability triangle.

If any vertex weakens, the system destabilizes.

Authority clarity is the least visible, and most neglected, constraint.

From Informal Alignment to Engineered Coordination

In early-stage AI initiatives, alignment is informal:

Enthusiastic sponsorship

Small teams

Low exposure

Limited regulatory scrutiny

As systems scale:

Exposure becomes material

Override becomes politically sensitive

Metrics fragment

Escalation slows

Informal alignment collapses under load.

Engineered coordination requires:

Named system owners

Explicit override authority

Escalation rehearsals

Metric traceability

Logged intervention behavior

These are not governance artifacts.

They are control mechanisms.

AI systems without control mechanisms are unstable by design.

What This Means for Practitioners

Before launching another pilot, scaling an existing system, or responding to a failure, ask:

Who is authorized to suspend inference today?

Who absorbs economic consequence?

Who can redefine the objective without committee delay?

Is override behavior normal — or politically costly?

Can accountability be diagrammed in under five minutes?

If the answer to any of these is unclear, the system’s constraint is not technical.

It is organizational.

AI maturity is not measured by model sophistication.

It is measured by the clarity of decision rights under stress.

In the practitioner edition, this synthesis includes:

A consolidated AI Authority Audit checklist

A production-readiness coordination scorecard

A board-level briefing template for AI accountability

A diagnostic model to determine whether to centralize or distribute AI ownership

These extensions do not change the synthesis. They operationalize it.

The purpose of The Journal of Applied AI is not to track novelty or celebrate technical feats in isolation.

It exists to surface the structural conditions under which AI becomes durable infrastructure rather than temporary advantage.

That requires uncomfortable clarity about boundaries, costs, controls, and responsibility.

Issue 7 will shift from structural coordination to post-hoc judgment:

What recent AI headlines get structurally wrong.

Rather than reacting to hype cycles, we will examine high-profile AI stories through a systems lens:

Where authority actually failed

Where governance claims obscured structural gaps

Where technical narratives distracted from organizational reality

Authority clarity does not only prevent internal failure.

It determines how organizations interpret, and misinterpret, the broader AI landscape.

Thank you for reading. This journal is published by Hypermodern AI.