IN THIS ISSUE

Why execution-capable AI systems fail without explicit control surfaces

How authority, not model quality, becomes the dominant risk factor once AI can act.

The silent failure mode of unstoppable systems

A recurring pattern where no one has the means, or the mandate, to intervene.

What practitioners discover only after deployment

Field observations on responsibility without authority, and governance learned too late.

How authority and risk propagate through real architectures

Diagrammatic analysis of execution paths, lateral movement, and containment boundaries.

What recent agentic AI cases reveal about trust and blast radius

A post-hoc analysis showing how risk persists when authority remains unchanged.

Signals pointing to a governance bottleneck

Why research, security, and regulation are converging on control, not capability.

A synthesis: control surfaces as the unit of trust

What changes when governance is designed into systems rather than assumed socially.

Abstract

As AI systems gain execution authority, their primary failure mode is no longer accuracy but governability. This essay argues that control surfaces (explicit points where decisions can be inspected, constrained, overridden, or revoked) are the missing architectural abstraction in most applied AI deployments. When control surfaces are implicit, organizations substitute trust, intent, or naming for enforceable structure. The result is automation without accountability.

The Shift That Broke the Old Assumptions

Most organizations still reason about AI as if it were a decision-support component. Models recommend. Humans decide. Risk is bounded by review.

That abstraction no longer holds.

Execution-capable systems (agents that can write files, call APIs, trigger workflows, or act across organizational boundaries) collapse the distance between decision and action. Once that distance disappears, the system inherits a new property:

It can do harm faster than governance can respond.

This is not a model problem. It is not a tooling problem. It is a systems design failure rooted in missing control surfaces.

What a Control Surface Is (and Is Not)

A control surface is an explicit, enforceable interface where authority can be:

scoped

inspected

interrupted

revoked

Control surfaces are architectural, not procedural. They exist whether or not anyone is watching.
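The four properties above can be made concrete with a minimal sketch. It assumes a Python agent whose tool calls are all routed through a single object. `ControlSurface`, `AuthorityError`, and the action names are hypothetical. In a real deployment, the enforcement point would sit at a process or permission boundary that the agent cannot bypass, not inside the agent's own code.

```python
from dataclasses import dataclass, field
from typing import Callable, NoReturn


class AuthorityError(Exception):
    """Raised when an action is attempted outside granted authority."""


@dataclass
class ControlSurface:
    # Hypothetical scope: the only actions this agent may take.
    allowed_actions: set[str]
    paused: bool = False
    revoked: bool = False
    # Inspection: every attempt is recorded, whether allowed or denied.
    audit_log: list[tuple[str, str]] = field(default_factory=list)

    def execute(self, action: str, fn: Callable[[], object]) -> object:
        """Single path from decision to effect; checks run on every call."""
        if self.revoked:
            self._deny(action, "revoked")
        if self.paused:
            self._deny(action, "paused")
        if action not in self.allowed_actions:
            self._deny(action, "out of scope")
        self.audit_log.append((action, "allowed"))
        return fn()

    def _deny(self, action: str, reason: str) -> NoReturn:
        self.audit_log.append((action, f"denied: {reason}"))
        raise AuthorityError(f"{action!r} denied: {reason}")

    # Interruption and revocation act on the surface, not the whole process.
    def pause(self) -> None:
        self.paused = True

    def resume(self) -> None:
        self.paused = False

    def revoke(self) -> None:
        self.revoked = True
```

Pausing in this sketch blocks new actions without tearing down the service. Revoking is a single, inspectable state change rather than a global credential rotation.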

What they are not:

dashboards

policies

documentation

“human-in-the-loop” as a slogan

If a system can act without passing through a control surface, governance exists only socially.

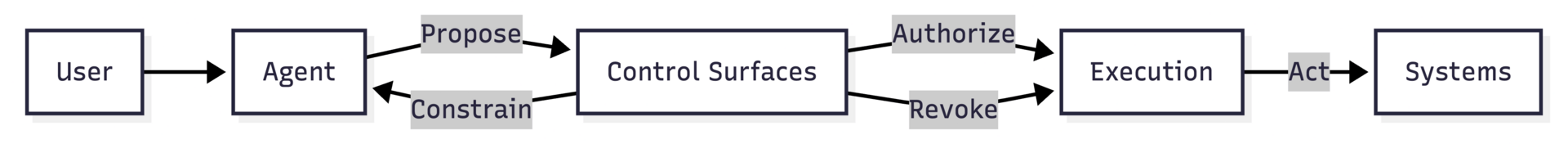

Control Surfaces as a First-Class System Layer

Figure: Control surfaces mediate authority between decision and execution. Without them, governance cannot act in real time.

Where Applied AI Systems Commonly Fail

Across production systems, the same pattern repeats:

Authority is delegated broadly (“the agent needs to be useful”)

Constraints are assumed implicitly (“we’ll notice if something goes wrong”)

Intervention paths are undefined (“we’ll just turn it off”)

Accountability is discovered post hoc

This is irreversible delegation: once granted, authority persists longer than intent, attention, or institutional memory.

The system works, until it doesn’t.
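One way to keep authority from outliving intent is to make every grant expire unless someone deliberately renews it. The sketch below assumes a lease model; `Lease`, `grant`, and `renew` are illustrative names, not an existing API. The clock is injectable so that the expiry logic can be tested.

```python
import time
from dataclasses import dataclass
from typing import Callable

Clock = Callable[[], float]


@dataclass(frozen=True)
class Lease:
    """Hypothetical time-bounded grant of authority over a fixed set of actions."""
    scope: frozenset[str]
    expires_at: float  # measured on the same clock used to grant it

    def permits(self, action: str, now: float) -> bool:
        # Authority lapses by default; nobody has to remember to revoke it.
        return action in self.scope and now < self.expires_at


def grant(scope: set[str], ttl_seconds: float, clock: Clock = time.monotonic) -> Lease:
    return Lease(scope=frozenset(scope), expires_at=clock() + ttl_seconds)


def renew(lease: Lease, ttl_seconds: float, clock: Clock = time.monotonic) -> Lease:
    # Renewal is an explicit decision that yields a new lease with the same scope.
    return Lease(scope=lease.scope, expires_at=clock() + ttl_seconds)
```

The design choice is to make persistence the exception. If attention drifts and nobody renews the lease, the authority simply lapses.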

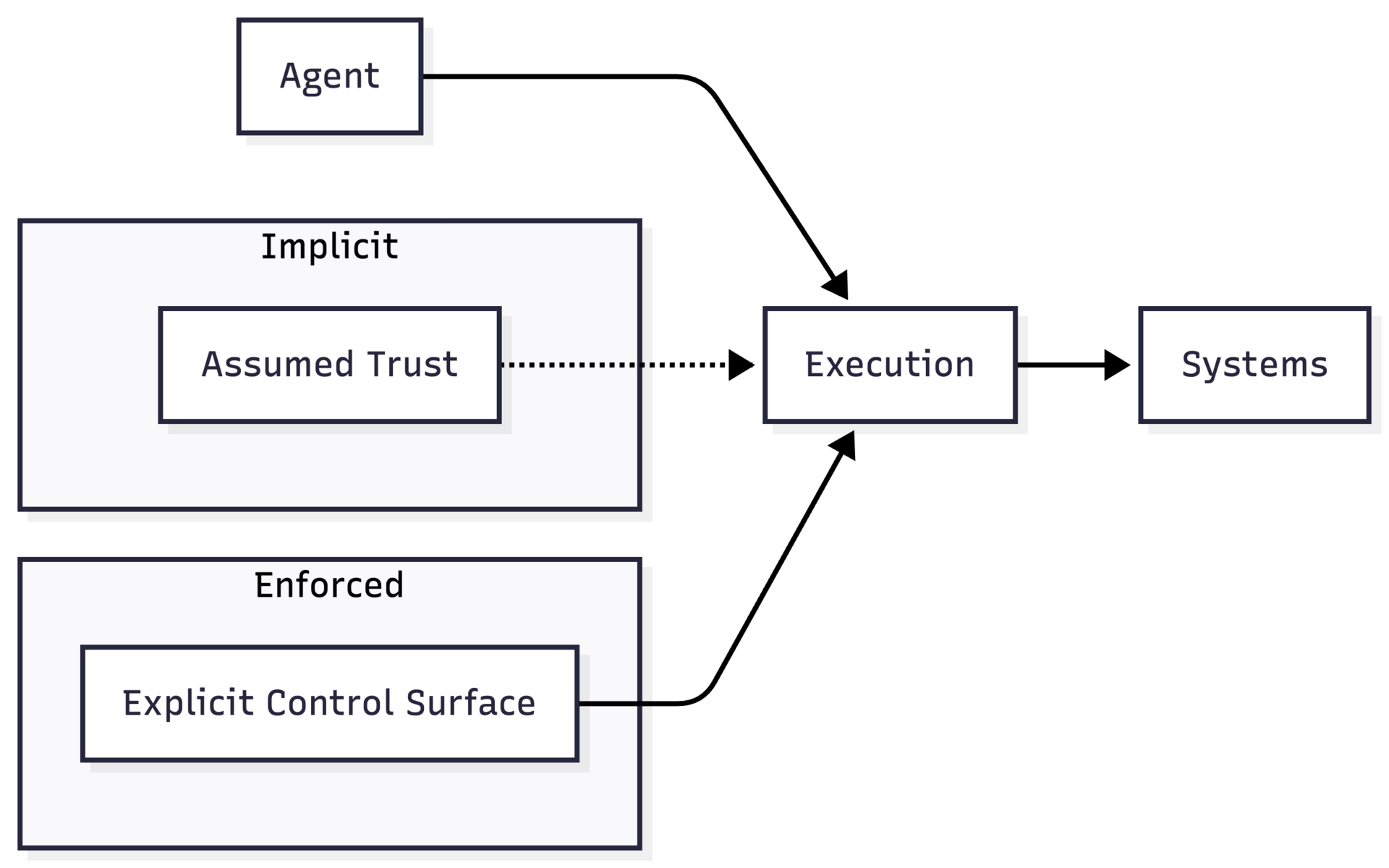

Implicit Trust Is Not a Control Surface

Many AI systems rely on implicit trust to fill architectural gaps:

trust in the model

trust in the developer

trust in user intent

trust in naming or branding

Implicit trust scales socially. AI systems scale computationally.

That mismatch sits at the root of many high-profile failures.

When trust is implicit, the system cannot distinguish between:

legitimate use and misuse

authorized action and abuse

experimentation and production

By the time humans recognize the difference, the system has already acted.

Implicit Trust vs Enforced Control

Figure: Implicit trust influences behavior socially. Control surfaces constrain behavior architecturally.

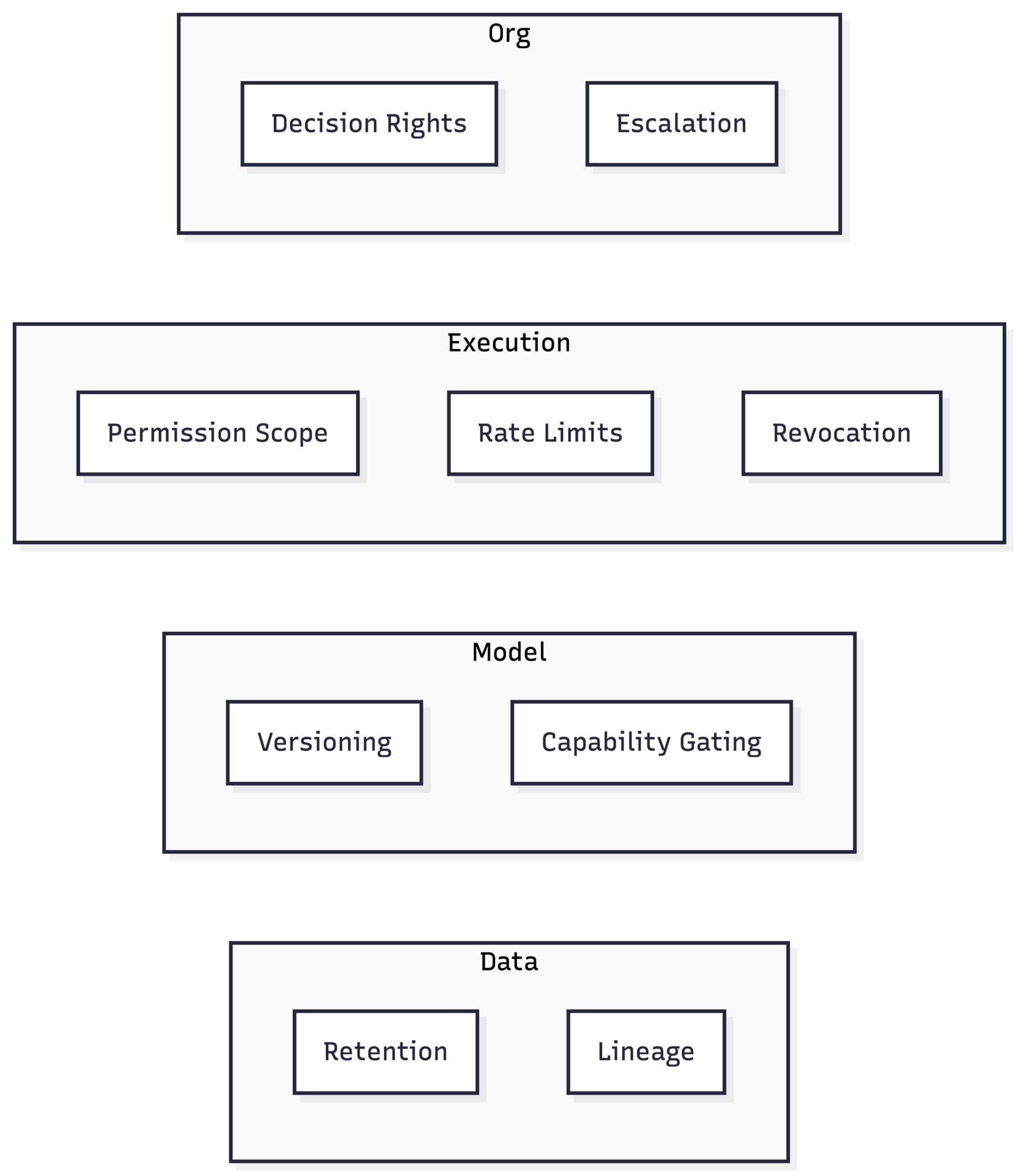

Control Surfaces Exist at Multiple Layers

Treating control as a single “kill switch” is itself a failure mode.

Effective systems expose control surfaces at multiple layers:

Data: lineage, retention, deletion authority

Model: versioning, rollback, capability gating

Execution: permission scoping, rate limits, revocation

Organization: decision rights, escalation paths

Missing any one of these creates a bypass.

Governance is only as strong as the weakest control surface.
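The layering argument can be expressed as a check that refuses to treat a missing layer as a pass. This is a sketch under two assumptions. First, each layer is modeled as a hypothetical predicate over a proposed action. Second, an absent layer is reported as a gap and fails closed, rather than silently letting the action through.

```python
from typing import Callable, Optional

# Hypothetical shape: a layer check is a predicate over a proposed action.
Check = Callable[[dict], bool]

# Layer names follow the essay.
LAYERS = ("data", "model", "execution", "organization")


def evaluate(action: dict, checks: dict[str, Optional[Check]]) -> tuple[bool, list[str]]:
    """Allow an action only if every layer has a check and every check passes.

    Returns (allowed, gaps), where gaps lists layers with no control surface.
    """
    gaps = [layer for layer in LAYERS if checks.get(layer) is None]
    if gaps:
        # A missing layer would be a bypass, so fail closed and report it.
        return False, gaps
    return all(checks[layer](action) for layer in LAYERS), []
```

Suppose the organization layer is left as `None` because no one has defined decision rights. Then `evaluate` returns `(False, ["organization"])`, however well the other three layers are configured.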

The Cost of Retrofitting Control

Organizations often respond to failures by adding:

policies

reviews

approval steps

These are compensatory controls layered after deployment.

But once execution authority is embedded, retrofitting control is expensive and brittle. The system has already been designed around autonomy.

This is why early architectural decisions matter more than later governance efforts.

In the practitioner edition, this essay includes:

A control-surface taxonomy mapped to data, model, execution, and org layers

A failure-mode table showing how control surfaces erode over time

Design questions to test whether an AI system is governable before deployment

These extensions do not change the argument. They make its consequences explicit.

IMPLEMENTATION BRIEF

Patterns & Failures

The Silent Failure Mode: When No One Can Stop the Model

This brief examines a recurring applied AI failure pattern: execution-capable systems deployed without a clear, enforceable stop condition. These systems often function correctly, until the moment intervention is required. At that point, organizations discover that no one has both the authority and the means to stop them.

The Pattern in Practice

The failure rarely announces itself.

An AI system is deployed to automate real work: triggering workflows, updating records, sending messages, executing commands. It operates within expected parameters for weeks or months. Confidence builds. Attention drifts.

Then something changes:

an upstream dependency behaves differently

an input distribution shifts

a user exploits an edge case

an external system fails

The system continues to act.

At this moment, teams attempt to intervene and discover that there is no clear control surface through which to do so.

What “Stopping the Model” Actually Means

In practice, “stop” can mean several different things:

pause execution

revoke permissions

limit scope

roll back state

disable specific actions

Many systems support none of these cleanly.

Instead, intervention relies on:

killing a process

revoking credentials globally

shutting down an entire service

These are blunt instruments. They trade localized risk for systemic disruption.
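A finer-grained alternative to killing the process is sketched below. It assumes that each action can be paired with a compensating undo function. `InterventionController` is a hypothetical name. It supports two of the options listed earlier: disabling one specific action while others keep running, and rolling back recent state in reverse order. Many real actions, such as a sent message or an external payment, have no clean inverse. Rollback of this kind is only as good as the compensations teams actually write.

```python
from typing import Callable

Step = Callable[[], None]


class InterventionController:
    """Hypothetical controller offering stops finer than killing the process."""

    def __init__(self) -> None:
        self.disabled: set[str] = set()
        # Each entry pairs an action name with its compensating (undo) function.
        self.undo_log: list[tuple[str, Step]] = []

    def run(self, name: str, do: Step, undo: Step) -> bool:
        if name in self.disabled:
            return False  # only this action is blocked; others continue
        do()
        self.undo_log.append((name, undo))
        return True

    def disable(self, name: str) -> None:
        self.disabled.add(name)

    def roll_back(self, steps: int) -> list[str]:
        """Apply compensating actions, most recent first."""
        undone = []
        for _ in range(min(steps, len(self.undo_log))):
            name, undo = self.undo_log.pop()
            undo()
            undone.append(name)
        return undone
```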

Execution Without an Intervention Path

Figure: The system has a continuous execution path but no enforced intervention surface. Intervention exists only conceptually.

Why This Failure Is Silent

This pattern persists because:

Most of the time, nothing goes wrong

The absence of a stop condition is invisible during normal operation.

Human-in-the-loop is assumed, not enforced

Oversight exists socially, not architecturally.

Intervention paths are undefined until needed

By the time they are discussed, the system is already acting.

Responsibility is distributed, authority is not

Many people feel accountable. No one can act.

Common Anti-Patterns That Precede the Failure

“We can always turn it off.”

(No one has tested what “off” means in production.)

“It only runs in limited cases.”

(Limits are implicit, not enforced.)

“We’ll notice if it misbehaves.”

(Detection lags execution.)

“It’s just an internal tool.”

(Internal systems still act on real data and infrastructure.)

Explicit vs Implicit Intervention

Figure: Implicit oversight depends on attention. Explicit controls operate regardless of attention.

The Underlying Structural Cause

This failure mode is not about intent or negligence. It is about misplaced abstraction.

Teams design AI systems around capability (“what can it do?”) rather than interruption (“how do we stop it?”).

In execution-capable systems, interruption is a primary requirement, not a contingency.
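Designing around interruption can be as simple as checking an explicit stop signal before every action, rather than relying on someone to kill the process. The sketch below uses Python's standard `threading.Event` as that signal; `run_interruptible` is a hypothetical helper. It only stops between steps, so a step already in progress still runs to completion.

```python
import threading
from typing import Callable, Iterable


def run_interruptible(steps: Iterable[Callable[[], None]], stop: threading.Event) -> int:
    """Hypothetical helper: execute steps one at a time, checking an
    explicit stop signal before each. Returns the number of steps completed."""
    completed = 0
    for step in steps:
        if stop.is_set():
            break  # interruption is checked on every iteration, not assumed
        step()
        completed += 1
    return completed
```

Any thread with access to the event, such as an operator console or a monitoring rule, can call `stop.set()`. The loop then halts at the next boundary without taking down the surrounding service.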

In the practitioner edition, this brief includes:

A decision checklist for when an AI system must have an explicit stop surface

A comparison of soft vs hard intervention mechanisms

A short list of questions operators should answer before granting execution authority

These extensions do not change the pattern. They make it operationally visible.

POST-HOC ANALYSIS

When Authority Persists, Risk Persists

Implicit Trust, Delegated Lateral Movement, and Irreversible Authority in Autonomous Systems (Clawbot → Moltbot → OpenClaw)

Purpose

This post-hoc analysis examines a visible agent lineage (Clawbot → Moltbot → OpenClaw) as evidence of a repeatable structural failure in agentic systems. The focus is not on exploit mechanics, naming choices, or attribution. It is on how execution authority and lateral movement were granted without enforceable control surfaces, allowing risk to persist unchanged across contextual shifts.

What Changed and What Persisted

Across this system’s evolution, many surface attributes changed: name, narrative framing, and external references. One property remained invariant: the authority model.

The system continued to operate as an execution-capable agent with broad local permissions, able to invoke tools that affect real state: filesystems, browsers, APIs, and command execution. These properties determine governance outcomes. Identity and description do not.

From a systems perspective, risk follows authority topology, not labels.

The Structural Failure, Precisely Stated

The failure is not that vulnerabilities existed.

The failure is pre-delegated authority combined with delegated lateral movement, without enforceable control surfaces.

Once execution authority was granted:

actions could occur faster than detection

scope exceeded original intent

a single flaw inherited a wide blast radius

revocation depended on operator attention

Exploit sophistication is secondary.

Blast radius is determined by authority scope.

Authority Scope Determines Blast Radius

Figure: Execution authority flows directly from agent to action. Governance exists conceptually, not as an enforced control surface. Any flaw inherits the full authority graph.
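The claim that blast radius follows authority scope can be made concrete by treating delegated authority as a directed graph. A flaw at the agent inherits every node reachable from it. The graph shape and system names are hypothetical; the function itself is an ordinary breadth-first search.

```python
from collections import deque


def blast_radius(grants: dict[str, set[str]], start: str) -> set[str]:
    """Everything reachable from `start` by following delegated authority.

    `grants` maps each node to the nodes it can act on (an assumed model).
    A flaw at `start` inherits this whole set.
    """
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for target in grants.get(node, set()):
            if target not in seen:
                seen.add(target)
                queue.append(target)
    seen.discard(start)
    return seen
```

Consider a broad grant such as `{"agent": {"filesystem", "browser", "shell"}, "shell": {"ssh_keys"}, "ssh_keys": {"prod_server"}}`. The reachable set includes `prod_server`, even though the agent was never granted it directly. Narrowing the agent's grant to `{"browser"}` shrinks the set without any change to the exploit itself.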

Governance Is Invariant to Naming

Naming, branding, and narrative framing do not participate in execution paths.

Governance properties are determined by:

how authority is scoped

where it can be exercised

how it can be revoked

If those properties remain unchanged, risk remains unchanged, regardless of how the system is referred to or explained.

This is not a criticism of renaming. It is a statement of architectural fact:

governance outcomes are invariant under identity changes.

Agentic AI Changes the Risk Model

Agentic systems bundle three properties that traditional systems kept separate:

Decision authority

Execution capability

Lateral movement

In non-agentic systems, moving laterally across systems typically requires multiple independent steps, credentials, and approvals. In agentic systems, lateral movement is often pre-authorized as a feature.

The consequence is structural:

A single initial flaw can propagate operational impact across systems that previously required distinct controls.

This is why vulnerabilities that would be contained in conventional software become systemic in agentic environments.

Local Execution Inverts Defensive Assumptions

Local agents collapse perimeter-based security models:

centralized revocation weakens

audit becomes optional

capability scope becomes coarse

response depends on human availability

In this context, governance must be embedded into execution paths. External assurances, best practices, or vulnerability scoring cannot compensate for missing control surfaces.

What This Case Confirms

This lineage confirms three durable properties of execution-capable AI systems:

Authority persists longer than attention

Implicit trust is not enforceable

Delegated lateral movement magnifies every flaw

These are architectural facts, not surprises. Treating them as operational anomalies is itself a governance failure.

In the practitioner edition, this analysis includes:

A security-oriented control-surface checklist for agentic systems

Named failure modes specific to local execution and lateral movement

Governance questions teams should answer before delegation

These extensions do not add facts. They make the risks actionable.

FIELD NOTES

From Practice

“We Assumed Someone Else Could Stop It”

Purpose

This field note synthesizes recurring observations from applied AI deployments where execution-capable systems were introduced without explicit control surfaces. The focus is not on a single failure, but on the organizational discovery process that follows: teams learn, often too late, that authority was granted without clarity about who could intervene, when, or how.

All examples are anonymized and composited.

The Moment of Realization

The moment is rarely dramatic.

An AI system has been running successfully for some time. It automates routine work. It saves time. It becomes background infrastructure.

Then an operator notices something “off”:

an unexpected action

a workflow triggered out of sequence

a change no one recalls approving

The immediate response is procedural: Who owns this? Who approved it?

Only later does the deeper question surface:

Who is actually able to stop it?

A Repeating Organizational Pattern

Across teams and industries, the same pattern appears:

Responsibility is shared

Multiple teams feel accountable for outcomes.

Authority is implicit

No single role has explicit stop or revoke rights.

Intervention is ad hoc

Action depends on finding “the right person” in time.

Post-hoc clarity replaces design-time clarity

Control surfaces are discussed only after they are needed.

The system did not malfunction.

The organization did.

Responsibility Without Authority

Figure: Many teams are responsible for outcomes, but no role has explicit, enforceable stop authority.
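A responsibility-to-authority mapping can be checked mechanically. The sketch below assumes two hypothetical role-to-system maps. One records who is responsible; the other records who holds enforceable stop rights. The check surfaces every system that someone answers for but no one can stop.

```python
def stop_authority_gaps(responsible: dict[str, set[str]],
                        can_stop: dict[str, set[str]]) -> set[str]:
    """Systems some role answers for but no role can actually stop.

    Both arguments map a role name to a set of system names (an assumed model).
    """
    answered_for = set().union(*responsible.values())
    stoppable = set().union(*can_stop.values())
    return answered_for - stoppable


def roles_without_stop_rights(responsible: dict[str, set[str]],
                              can_stop: dict[str, set[str]]) -> dict[str, set[str]]:
    """For each role, the systems it answers for but cannot stop itself."""
    gaps = {role: systems - can_stop.get(role, set())
            for role, systems in responsible.items()}
    return {role: missing for role, missing in gaps.items() if missing}
```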

What Teams Commonly Discover

In post-incident reviews, teams often realize that:

“Human-in-the-loop” was never technically enforced

Pausing the system required full service shutdown

Revoking credentials broke unrelated workflows

Logs existed, but were not actionable in real time

None of these were surprises individually.

Together, they reveal a missing control surface.

The False Comfort of Organizational Proximity

A common assumption is that organizational closeness compensates for missing controls.

Teams say:

“It’s internal.”

“We sit next to each other.”

“We’ll notice quickly.”

In practice, execution-capable systems move faster than coordination. Once authority is embedded, proximity does not scale.

This is where trust silently substitutes for design.

Assumed vs Enforced Intervention

Figure: Organizational awareness influences behavior only indirectly. Control surfaces constrain behavior directly.

The Organizational Cost of Discovery

After the fact, teams respond predictably:

emergency reviews

temporary freezes

new approval meetings

documentation updates

These measures restore confidence, but not control.

The underlying architecture remains unchanged. The same discovery will happen again, under different conditions.

In the practitioner edition, these field notes include:

A cross-case synthesis of organizational breakdowns

A responsibility-to-authority mapping table

“What changed after correction” patterns from real deployments

These extensions do not change the argument. They make its consequences explicit.

VISUALIZATION

Mapping Control Surfaces in Execution-Capable AI Systems

Purpose

This visual essay makes control surfaces legible in execution-capable AI systems. Rather than describing failures abstractly, it shows where authority flows, where it should be constrained, and where missing control surfaces allow risk to propagate.

The diagrams are the primary artifact. The text exists to orient interpretation.

From Decision Support to Execution Authority

Most AI system diagrams stop at inference. Execution-capable systems do not.

The critical transition is the moment when model output becomes action, when decisions directly modify external state. At that point, governance must move from policy to architecture.

Figure: A minimal execution path. Authority flows uninterrupted from decision to action. No control surfaces exist between intent and effect.

This structure is common because it is simple and fast. It is also brittle.

Where Control Must Intervene

Control surfaces are not a single checkpoint. They exist at distinct layers, each constraining a different failure mode.

Figure: Control surfaces mediate authority. They can authorize, constrain, or revoke execution without halting the entire system.

This is the minimal structure required for governability.

Control Surfaces Are Layered, Not Centralized

Treating control as a single “governance layer” obscures where failures actually occur.

Figure: Control surfaces exist across data, model, execution, and organizational layers. Missing any one creates a bypass.

Governance strength is defined by the weakest surface, not the most mature one.

Lateral Movement as a Risk Multiplier

In agentic systems, execution authority often includes the ability to move laterally across systems.

Figure: Lateral movement is pre-authorized. A single decision can propagate across multiple systems without intermediate approval.

This is efficient by design, and dangerous by default.

Containing Lateral Movement

Constraining lateral movement does not require eliminating autonomy. It requires intermediate control surfaces.

Figure: Each boundary has its own control surface. Authority does not automatically propagate.

This structure increases friction intentionally. That friction is governance.
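The hop-by-hop structure can be sketched as a gate that holds explicit grants for individual boundary crossings and never infers one grant from another. `BoundaryGate` and the system names are hypothetical. The key property is that holding `agent → crm` and `crm → email` does not imply `agent → email`.

```python
from typing import Optional


class BoundaryGate:
    """Hypothetical gate holding explicit grants for single boundary crossings."""

    def __init__(self, hops: set[tuple[str, str]]) -> None:
        self.hops = set(hops)

    def can_cross(self, src: str, dst: str) -> bool:
        # Only an exact (src, dst) grant counts; nothing is inferred transitively.
        return (src, dst) in self.hops

    def check_path(self, path: list[str]) -> tuple[bool, Optional[tuple[str, str]]]:
        """Return (True, None) if every hop is granted, else (False, first denied hop)."""
        for src, dst in zip(path, path[1:]):
            if not self.can_cross(src, dst):
                return False, (src, dst)
        return True, None

    def revoke(self, src: str, dst: str) -> None:
        # Revoking one boundary leaves every other boundary untouched.
        self.hops.discard((src, dst))
```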

What the Diagrams Make Explicit

Across these diagrams, three structural truths become visible:

Execution is the point of risk concentration

Authority flows unless explicitly interrupted

Governance must be embedded, not observed

These are not policy statements. They are architectural constraints.

In the practitioner edition, this visualization includes:

Higher-resolution versions of each diagram with failure paths

A parameterized control-surface template for agentic systems

Copyable Mermaid diagrams for reuse in design reviews

These extensions do not introduce new concepts. They increase resolution.

RESEARCH & SIGNALS

Signals Pointing to the Control Surface Problem

Purpose

This section interprets recent research, benchmarks, and incident patterns through a single lens: control surfaces, not capability, are becoming the binding constraint in applied AI systems. The goal is not to summarize developments, but to explain why they matter structurally for teams deploying execution-capable AI.

Signal 1 — Agentic Benchmarks Are Outpacing Governance Models

Across recent evaluations of agentic systems, performance gains increasingly come from:

longer action horizons

tool chaining

autonomous retry and correction loops

These benchmarks reward persistence and autonomy, not interruptibility.

Why this matters:

Systems optimized for task completion tend to erase natural pause points. Without explicit control surfaces, higher capability directly increases blast radius.
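One way to restore a pause point in an autonomous retry loop is to give it an explicit budget and escalate when the budget runs out. The sketch assumes a task that reports success as a boolean. `retry_with_budget` and `NeedsHumanReview` are hypothetical names.

```python
from typing import Callable


class NeedsHumanReview(Exception):
    """Hypothetical escalation raised when the retry budget is exhausted."""


def retry_with_budget(task: Callable[[], bool], max_attempts: int) -> int:
    """Retry `task` until it succeeds or the budget runs out.

    Returns the attempt number that succeeded. Exhausting the budget
    re-introduces a pause point instead of looping indefinitely.
    """
    for attempt in range(1, max_attempts + 1):
        if task():
            return attempt
    raise NeedsHumanReview(f"gave up after {max_attempts} attempts")
```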

Signal 2 — Security Research Is Shifting From Exploits to Authority

Recent security analyses increasingly emphasize:

permission scope

trust boundaries

lateral movement

rather than novel exploit techniques.

Why this matters:

This reflects a tacit recognition that what a system is allowed to do matters more than how access is obtained. In agentic systems, authority is often pre-granted, making exploitation less sophisticated but more consequential.

Signal 3 — Local and Self-Hosted AI Is Expanding Faster Than Controls

There is growing adoption of:

self-hosted agents

local execution environments

user-managed automation

These systems deliberately bypass centralized platforms and perimeters.

Why this matters:

Local execution collapses inherited security guarantees. Governance must be embedded in the system, because there is no external authority to rely on.

Signal 4 — “Human-in-the-Loop” Is Being Treated as a Safety Primitive

Across product documentation and deployment guides, human oversight is frequently cited as the primary safety mechanism for autonomous systems.

Why this matters:

Human-in-the-loop is an operational pattern, not a control surface. It depends on attention, availability, and context. As systems scale, this dependency becomes a failure mode.
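The difference between assumed and enforced oversight shows up in what happens when no human responds. The sketch below assumes that approvals arrive on a standard `queue.Queue` as `(action, approved)` pairs; `gated_execute` is a hypothetical name. Silence, a rejection, or an approval for a different action all result in no execution. The gate therefore fails closed instead of depending on someone's attention.

```python
import queue
from typing import Callable


def gated_execute(action: str, approvals: queue.Queue, timeout_s: float,
                  do: Callable[[], None]) -> bool:
    """Hypothetical gate: run `do` only if a human approves `action`
    within `timeout_s` seconds. Returns True if the action ran."""
    try:
        approved_action, approved = approvals.get(timeout=timeout_s)
    except queue.Empty:
        return False  # nobody answered in time: deny by default
    if approved_action != action or not approved:
        return False  # rejected, or approval was for something else
    do()
    return True
```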

Signal 5 — Regulation Is Converging on Accountability, Not Models

Regulatory and policy discussions are increasingly focused on:

traceability

responsibility

auditability

rather than model internals.

Why this matters:

This aligns with the architectural reality: regulators care about who can act, when, and with what authority, not how predictions are generated. Systems without explicit control surfaces will struggle to demonstrate compliance regardless of model quality.

What These Signals Have in Common

Taken together, these signals point to a single conclusion:

Applied AI systems are being evaluated, attacked, and regulated based on authority and control, not intelligence.

The gap is not between research and practice.

It is between capability growth and governance design.

In the practitioner edition, these signals include:

A cross-signal synthesis mapping trends to specific control-surface failures

Early indicators teams can monitor to detect governance drift

Design implications for near-term agentic deployments

These extensions do not add new signals. They clarify how to act on them.

SYNTHESIS

Control Surfaces as the Unit of Trust

Purpose

This close synthesizes the issue’s core claim: in execution-capable AI systems, reliability and safety are no longer primarily model properties. They are system properties, determined by whether authority is visible, bounded, interruptible, and accountable. The practical unit that expresses those properties is the control surface.

What This Issue Established

Across the lead essay, implementation brief, post-hoc analysis, field notes, and diagrams, the same structural pattern recurs:

Execution collapses the distance between decision and action

Authority persists longer than attention

Lateral movement turns local faults into systemic outcomes

“Human oversight” is often assumed rather than enforced

None of these are surprising. What’s surprising is how often teams continue to treat them as operational edge cases rather than architectural invariants.

The Core Reframe

Most AI programs still frame governance as a layer you add after the system works.

In execution-capable systems, that order is inverted.

Governance is the mechanism by which the system is allowed to work.

A control surface is where that mechanism becomes real:

where permissions are scoped

where actions are logged by default

where execution can be paused, narrowed, or revoked

where accountability is encoded rather than debated

If these surfaces do not exist, trust remains social while the system's behavior is architectural. That mismatch will eventually surface as failure.
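Logging by default and encoding accountability can be sketched as an append-only log. Each entry records who acted, what they did, and under which authority, and commits to the previous entry by SHA-256 hash. This is a tamper-evidence sketch under stated assumptions: storage is in-memory, entries are unsigned, and `AuditLog` is a hypothetical name. It is not a complete audit system. Editing an earlier entry makes `verify()` fail, unless the whole chain is recomputed. That residual gap is why production systems typically anchor the latest hash somewhere the agent cannot write.

```python
import hashlib
import json
import time
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    """Hypothetical append-only, hash-chained record of who did what, under which authority."""
    entries: list[dict] = field(default_factory=list)

    def record(self, actor: str, action: str, authority: str) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"actor": actor, "action": action, "authority": authority,
                "ts": time.time(), "prev": prev}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; an edited or reordered entry breaks it."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True
```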

What “Good” Looks Like (Operationally)

A governable AI system is not one that never fails. It is one that:

fails locally rather than globally

can be interrupted without collateral shutdown

exposes actions and authority as inspectable state

assigns decision rights that match execution rights

contains blast radius by design

This is why control surfaces are not “compliance features.” They are resilience primitives.

What Changes When You Design for Control

The main misconception is that control surfaces slow systems down.

In practice, explicit control surfaces enable:

faster incident response (because intervention paths exist)

safer autonomy (because authority is scoped)

clearer ownership (because decision rights are explicit)

more durable deployments (because drift is detectable)

Teams that design control early ship faster later, because they avoid the recurring cycle of “successful automation → surprise → emergency governance.”

In the practitioner edition, this synthesis includes:

A short “control surface review” protocol for production readiness

A set of operator questions to evaluate delegation and lateral movement

A template for mapping responsibility to authority

These extensions do not change the conclusion. They make it repeatable.

The purpose of The Journal of Applied AI is not to track novelty or celebrate technical feats in isolation.

It exists to surface the structural conditions under which AI becomes durable infrastructure rather than temporary advantage.

That requires uncomfortable clarity: about boundaries, costs, controls, and responsibility.

In the next issue, we will examine governance drift: how control surfaces erode over time through convenience, scope creep, and quiet exceptions, until the system is effectively ungovernable again.

Thank you for reading. This journal is published by Hypermodern AI.