Every serious failure of an AI system eventually converges on the same demand.

A decision is questioned. A consequence must be justified. And the system is asked to demonstrate, precisely and reproducibly, why that decision occurred under those conditions and not others.

This moment is typically framed as a failure of explainability. The model is opaque. The reasoning is probabilistic. The internal state cannot be fully inspected. From this framing follows a familiar conclusion: modern AI systems are fundamentally incompatible with deterministic guarantees, regulatory expectations, or formal accountability.

That conclusion is wrong.

The inability to explain, reproduce, or justify an AI-driven outcome is rarely a property of the model itself. It is almost always a property of the architecture in which the model is embedded. More specifically, it is the result of systems that treat determinism as a reporting concern rather than as a design constraint.

Most production AI systems today are not designed as coherent decision systems. They are assembled from components optimized independently: data pipelines optimized for throughput, models optimized for benchmark performance, orchestration layers optimized for flexibility, and governance mechanisms added after deployment. Each component may behave correctly in isolation, yet the system as a whole becomes indeterminate when examined over time.

Inputs drift without versioning. Context is reconstructed dynamically. Feature generation occurs asynchronously. Authority is enforced at interfaces rather than encoded into state. Logs accumulate, but state must be inferred. Provenance exists as metadata rather than as a first-class artifact.

When such a system is asked to justify a historical decision, it cannot do so deterministically, not because intelligence is stochastic, but because the system itself lacks a stable definition of what decision was actually made.

Determinism Is Not Predictability

A persistent source of confusion in applied AI is the conflation of determinism with predictability.

Determinism does not require that outcomes be simple, fixed, or easily anticipated. It requires that outcomes be derivable from declared state, inputs, and rules. A deterministic system may produce complex or probabilistic outputs while remaining fully reproducible when those conditions are held constant.

In this sense, determinism is not a property of intelligence. It is a property of execution.
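To make the distinction concrete, here is a minimal Python sketch. It assumes the declared state includes an explicit seed alongside versioned inputs and rules. The recommendation is sampled from a weighted distribution, yet replaying it from the same declared state reproduces the same choice. The field names and the `sample_recommendation` helper are hypothetical and chosen only for illustration.

```python
import hashlib
import json
import random

def seed_from_state(declared_state: dict) -> int:
    """Derive a stable integer seed from the canonicalized declared state."""
    canonical = json.dumps(declared_state, sort_keys=True)
    digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return int(digest[:16], 16)

def sample_recommendation(declared_state: dict) -> str:
    """Probabilistic choice whose outcome is fully determined by the declared state."""
    rng = random.Random(seed_from_state(declared_state))  # private RNG, no global state
    return rng.choices(declared_state["options"], weights=declared_state["weights"], k=1)[0]

# Hypothetical declared state: versioned inputs and rules, an explicit seed, a distribution.
state = {
    "inputs_version": "v12",
    "rules_version": "policy-3",
    "seed": 42,
    "options": ["approve", "review", "decline"],
    "weights": [0.6, 0.3, 0.1],
}

first = sample_recommendation(state)
replay = sample_recommendation(dict(state))  # later replay from the same declared state
print(first == replay)  # True
```

The output is probabilistic in form but not in execution: change any declared field and the draw may change, hold them constant and it cannot.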

A deterministic system provides several guarantees:

System state is explicitly defined at all times

State transitions occur only through declared mechanisms

Inputs are versioned, validated, and scoped

Authority to act is bounded and inspectable

Side effects are governed rather than incidental

Historical state can be reconstructed without inference

None of these guarantees constrain the use of probabilistic models. They constrain the system in which those models operate.
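As an illustration of the first, second, and last guarantees, the following Python sketch models a hypothetical decision lifecycle as an explicit state machine. Undeclared transitions are rejected, and any historical state is recomputed from the append-only history rather than inferred. The state and event names are assumptions, not a prescribed lifecycle.

```python
from dataclasses import dataclass, field

INITIAL_STATE = "received"

# Hypothetical lifecycle: every legal (state, event) pair is declared up front.
TRANSITIONS = {
    ("received", "validate"): "validated",
    ("received", "reject"): "rejected",
    ("validated", "score"): "scored",
    ("scored", "commit"): "committed",
}

@dataclass
class DecisionLifecycle:
    state: str = INITIAL_STATE
    history: list = field(default_factory=list)  # append-only list of applied events

    def apply(self, event: str) -> None:
        """Perform a transition only if it is declared; otherwise refuse."""
        key = (self.state, event)
        if key not in TRANSITIONS:
            raise ValueError(f"undeclared transition {event!r} from state {self.state!r}")
        self.history.append(event)
        self.state = TRANSITIONS[key]

    def state_at(self, step: int) -> str:
        """Recompute the state after `step` events by replaying declared transitions."""
        state = INITIAL_STATE
        for event in self.history[:step]:
            state = TRANSITIONS[(state, event)]
        return state

lifecycle = DecisionLifecycle()
for event in ["validate", "score", "commit"]:
    lifecycle.apply(event)
print(lifecycle.state, lifecycle.state_at(1))  # committed validated
```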

From Logs to State: Where Explainability Actually Lives

Figure: Where explainability actually lives.

In non-deterministic systems, explanation is assembled from logs. In deterministic systems, explanation is derived from state.

Logs describe events. State defines reality.

Observability Is Descriptive, Not Normative

Over the past decade, engineering organizations have invested heavily in observability as a remedy for system opacity. Distributed tracing, structured logging, and metrics have become foundational infrastructure.

In AI systems, however, observability is frequently mistaken for control.

Observability can describe what happened. It cannot define what could not have happened. It operates after execution, not before it. It is descriptive rather than normative.

Regulators, auditors, and internal risk functions are not primarily interested in narratives. They are interested in boundaries. They want to know which actions were possible under which conditions, and why others were not.

A deterministic system answers these questions structurally. The system itself encodes which transitions are allowed, which are forbidden, and which must be recorded when they occur.
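The structural answer can be sketched in Python as a declared rule table, assuming a hypothetical set of states, actions, and roles. Any action absent from the table is forbidden by construction, so the system can state both what was possible and why the alternatives were not.

```python
from typing import NamedTuple

class Rule(NamedTuple):
    source: str          # state in which the action may occur
    action: str
    target: str          # state after the action
    roles: frozenset     # roles authorized to take the action
    must_record: bool    # whether the transition must be committed to the record

# Hypothetical rule table; nothing outside it can happen.
RULES = [
    Rule("scored", "auto_approve", "approved", frozenset({"system"}), True),
    Rule("scored", "escalate", "in_review", frozenset({"system", "analyst"}), True),
    Rule("scored", "annotate", "scored", frozenset({"analyst"}), False),
    Rule("in_review", "approve", "approved", frozenset({"analyst"}), True),
]

def explain_actions(state: str, role: str) -> dict:
    """For every known action, state whether it was possible here and why."""
    verdicts = {}
    for action in sorted({rule.action for rule in RULES}):
        match = next((r for r in RULES if r.source == state and r.action == action), None)
        if match is None:
            verdicts[action] = "forbidden: no declared transition from this state"
        elif role not in match.roles:
            verdicts[action] = f"forbidden: role {role!r} is not authorized"
        elif match.must_record:
            verdicts[action] = "allowed: must be recorded"
        else:
            verdicts[action] = "allowed: no record required"
    return verdicts

for action, verdict in explain_actions("scored", "system").items():
    print(f"{action}: {verdict}")
```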

The Cost of Post‑Hoc Explanation

The operational cost of indeterminate architecture is familiar to practitioners.

Compliance reviews become forensic exercises. Engineering teams spend weeks reconstructing historical behavior. Slight discrepancies between environments invalidate reproductions. Data scientists are asked to explain outcomes using models that no longer exist in the same form. Organizational trust erodes as explanations change over time.

Most organizations respond by adding layers: more logging, stricter documentation requirements, manual approval gates, and human-in-the-loop processes intended to compensate for architectural uncertainty.

These measures increase friction without addressing the root cause. Explanation is treated as a reporting obligation rather than as an execution property.

Determinism as a Design Discipline

Figure: Determinism as an architectural property.

Treating determinism as an architectural property changes the design conversation.

Instead of asking how to explain a model’s behavior, architects ask how decisions are constructed. Instead of focusing on interpretability techniques, they define execution boundaries. Instead of accumulating traces, they establish authoritative state.

This shift has concrete implications:

Schemas function as executable constraints, not documentation

Pipelines become explicit state machines rather than opaque flows

Provenance is committed at transition time, not inferred later

Authority is encoded into data and execution context

Reproducibility is guaranteed by construction

In such systems, explanation is no longer narrative. It is derivation.
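The first of these commitments, schemas as executable constraints, can be sketched in Python with a frozen dataclass that refuses to exist unless its constraints hold. The schema, field names, and version string are hypothetical. The point is that an invalid input is rejected before execution instead of being documented and logged afterwards.

```python
from dataclasses import dataclass

SUPPORTED_SCHEMA = "2"  # hypothetical current schema version

@dataclass(frozen=True)
class RecommendationInput:
    """Hypothetical input schema: an instance exists only if every constraint holds."""
    schema_version: str
    subject_id: str
    feature_set_version: str
    risk_score: float

    def __post_init__(self):
        if self.schema_version != SUPPORTED_SCHEMA:
            raise ValueError(f"unsupported schema_version {self.schema_version!r}")
        if not self.subject_id:
            raise ValueError("subject_id is required")
        if not self.feature_set_version:
            raise ValueError("feature_set_version must be pinned")
        if not 0.0 <= self.risk_score <= 1.0:
            raise ValueError("risk_score outside declared range [0, 1]")

ok = RecommendationInput("2", "S-104", "fs-v7", 0.42)

try:
    RecommendationInput("2", "S-105", "", 0.42)
except ValueError as err:
    print(f"rejected before execution: {err}")  # ... feature_set_version must be pinned
```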

Why This Layer Is Still Missing

If determinism is so central, why is it so often absent?

AI capabilities have advanced faster than the systems required to govern them. Many organizations adopted AI opportunistically, embedding models into architectures that were never designed to carry decision authority. Early success was measured in performance metrics, not reproducibility or audit cost.

Determinism is also uncomfortable. It forces explicit decisions about authority, ownership, and responsibility. It removes plausible deniability. It exposes architectural shortcuts.

Yet these discomforts are precisely what make systems governable.

If a system cannot reproduce its own decisions, it does not control them.

That fact, not model opacity, is what ultimately determines whether AI can be trusted in production.

Deterministic systems do not explain themselves in prose. They explain themselves in structure. When state transitions are explicit, authority is bounded, and provenance is immutable, explanation becomes a property of execution rather than interpretation.

In the practitioner edition, this essay includes:

A failure-mode mapping table contrasting indeterminate and deterministic architectures

Concrete architectural commitments required to make decisions reproducible

Design implications for auditability, authority boundaries, and long-term governance

These extensions do not change the argument. They make its operational consequences explicit.

IMPLEMENTATION BRIEF

Why Observability‑First AI Fails Audits

Patterns & Failures

AI systems rarely fail audits because they behave incorrectly. They fail audits because they cannot demonstrate control.

In post‑mortem and compliance reviews, a recurring pattern emerges: teams can describe what happened, but cannot prove why it had to happen that way. The distinction is subtle but decisive. Regulators do not evaluate plausibility. They evaluate determinism.

This brief examines why observability‑first AI architectures consistently collapse under audit pressure, and what that failure reveals about deeper architectural assumptions.

The Observability Assumption

Most modern AI systems are designed with observability as the primary mechanism for accountability. Inputs are logged. Outputs are recorded. Intermediate reasoning steps may be captured. Traces and metrics are aggregated across services.

The implicit assumption is that, given sufficient telemetry, past decisions can be reconstructed with adequate fidelity to satisfy audit and regulatory requirements.

In practice, this assumption fails.

Observability is optimized for diagnosis, not proof. It answers the question “what happened?” after execution, but it cannot answer “what else could not have happened?” without additional structural guarantees.

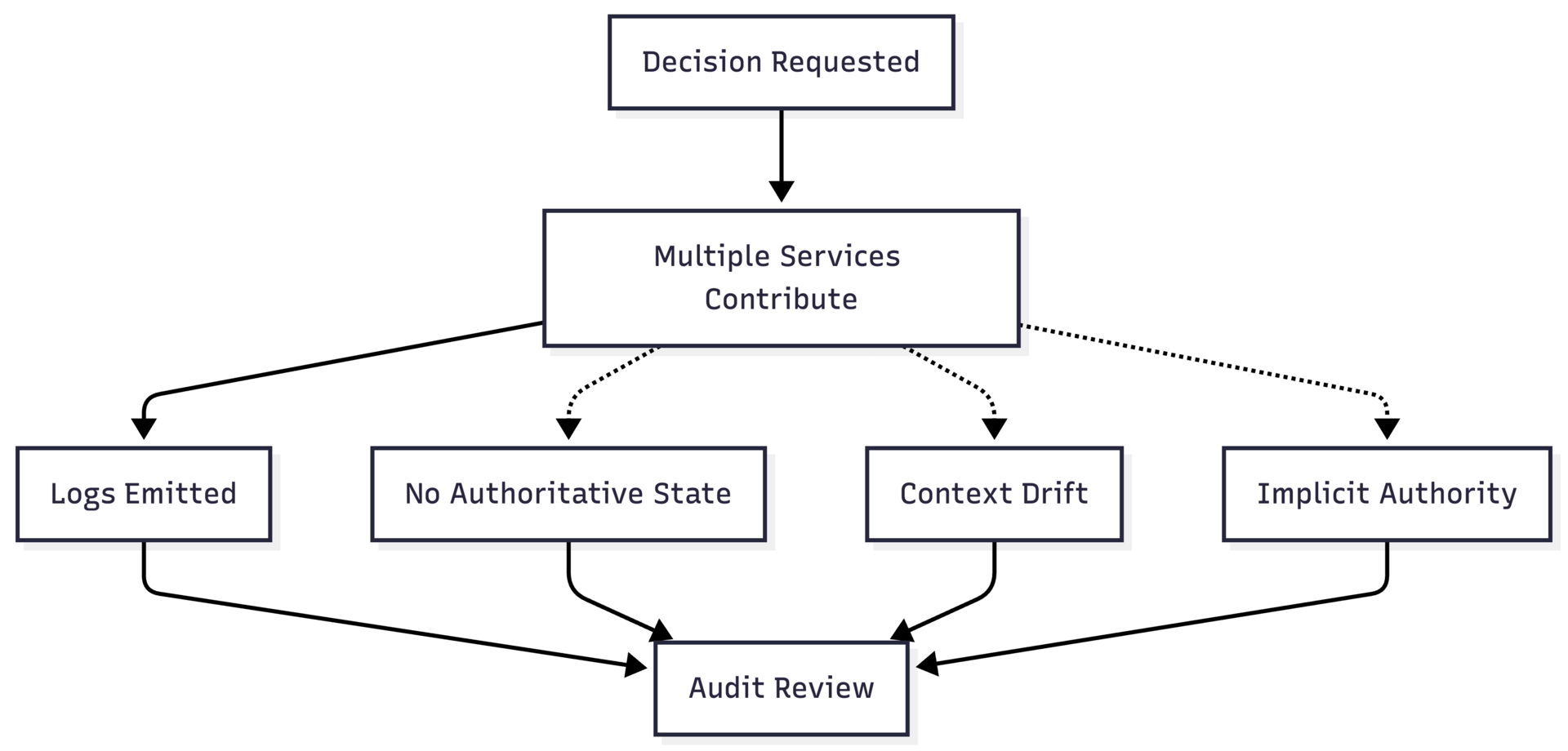

Where Audit Breakdown Occurs

Figure: Audit breakdown in an observability-first AI system.

Audit failure typically manifests at three pressure points:

State ambiguity — Logs describe events, not authoritative state. When multiple services contribute to a decision, there is no single, agreed-upon snapshot of system state at decision time.

Context drift — Inputs, features, and reference data change over time. Replaying a decision months later often requires approximating historical context rather than reproducing it exactly.

Implicit authority — Access controls indicate who can call a system, not who is authorized to make a specific decision under specific conditions.

Each of these failures forces auditors and internal reviewers into inference. Inference is precisely what regulated environments are designed to avoid.
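Context drift in particular has a simple structural remedy: reference data is published as immutable versions, and each decision records the version it was evaluated against. The following is a minimal Python sketch in which `VersionedStore`, `decide`, and the `auto_limit` threshold are hypothetical.

```python
class VersionedStore:
    """Append-only reference data: publishing never overwrites an earlier version."""

    def __init__(self):
        self._versions = []

    def publish(self, data: dict) -> int:
        self._versions.append(dict(data))  # shallow copy keeps caller edits out of history
        return len(self._versions) - 1

    def get(self, version: int) -> dict:
        return dict(self._versions[version])

    def latest(self) -> int:
        return len(self._versions) - 1

def decide(amount: int, reference: dict) -> str:
    return "approve" if amount <= reference["auto_limit"] else "review"

store = VersionedStore()
v0 = store.publish({"auto_limit": 500})

# The decision record pins the reference version it was evaluated against.
record = {"amount": 400, "ref_version": v0}
record["outcome"] = decide(record["amount"], store.get(record["ref_version"]))

store.publish({"auto_limit": 300})  # reference data changes later

exact_replay = decide(record["amount"], store.get(record["ref_version"]))
naive_replay = decide(record["amount"], store.get(store.latest()))
print(record["outcome"], exact_replay, naive_replay)  # approve approve review
```

Replaying against the pinned version reproduces the decision; replaying against "current" data only approximates it, which is exactly the inference auditors object to.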

Why More Logging Does Not Help

When observability proves insufficient, teams often respond by increasing instrumentation. More logs, finer‑grained traces, richer metadata.

This rarely improves audit outcomes.

Additional telemetry increases volume without resolving ambiguity. Logs remain interpretive artifacts. They still require narrative assembly. They still depend on assumptions about ordering, completeness, and intent.

In some cases, increased logging makes matters worse by introducing conflicting accounts of the same decision across services.

Determinism as the Missing Control Surface

Figure: Deterministic execution boundary with validated inputs and committed provenance.

Audit-resilient AI systems invert the observability-first model.

Instead of treating logs as the source of truth, they treat state as authoritative and logs as secondary artifacts. Decisions occur only through declared state transitions governed by executable constraints.

In such systems:

Inputs are versioned and validated before execution

Execution occurs within explicit state machines

Authority is encoded into execution context

Provenance is committed at transition time

Audits no longer depend on reconstruction. They depend on inspection.
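A minimal Python sketch of such a boundary follows. It assumes that a decision record captures an input digest, the model and policy versions, and the authority grant in force. All names are hypothetical, and authority checking is reduced here to a recorded grant identifier for brevity. What matters is that everything an auditor needs is written at transition time, so review becomes a matter of reading fields rather than reassembling logs.

```python
import hashlib
import json
from dataclasses import asdict, dataclass

REQUIRED_FIELDS = {"subject_id", "risk_score"}  # hypothetical completeness rule

@dataclass(frozen=True)
class CommittedDecision:
    input_digest: str    # SHA-256 of the canonicalized, validated inputs
    model_version: str
    policy_version: str
    grant_id: str        # identifier of the authority grant in force
    outcome: str

def canonical_digest(payload: dict) -> str:
    encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()

def execute_at_boundary(inputs, *, model_fn, model_version, policy_version, grant_id):
    # 1. Validate: refuse to execute on incomplete inputs.
    missing = REQUIRED_FIELDS - set(inputs)
    if missing:
        raise ValueError(f"incomplete inputs: {sorted(missing)}")
    # 2. Execute: the component sees only the validated inputs.
    outcome = model_fn(inputs)
    # 3. Commit: provenance is captured at transition time, not reconstructed later.
    return CommittedDecision(canonical_digest(inputs), model_version,
                             policy_version, grant_id, outcome)

def toy_model(x):
    return "review" if x["risk_score"] > 0.7 else "approve"

record = execute_at_boundary(
    {"subject_id": "S-104", "risk_score": 0.42},
    model_fn=toy_model, model_version="m-3.1", policy_version="p-7", grant_id="G-12",
)
print(asdict(record)["outcome"])  # approve
```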

Practical Signal for Architects

If an AI system cannot answer the following question deterministically, it is not audit-ready:

Given the recorded state at time t, could this decision have occurred differently?

If the answer relies on inference, approximation, or narrative explanation, the system’s architecture, not the model, is the liability.
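One way to make that question mechanical, sketched in Python under the assumption that decision logic is a pure function of recorded state, is to replay the recorded state and compare the result with the recorded outcome. The second function reads a hypothetical mutable threshold that the record never captured; the same check exposes that decision as dependent on unrecorded context.

```python
def decide(state: dict) -> str:
    # Pure function of the recorded state: no clock, network, or hidden globals.
    if state["risk_score"] >= state["policy"]["review_threshold"]:
        return "review"
    return "approve"

def replays_identically(record: dict, decide_fn, runs: int = 3) -> bool:
    """True if every replay of the recorded state reproduces the recorded outcome."""
    return {decide_fn(record["state"]) for _ in range(runs)} == {record["outcome"]}

# Hidden, mutable context that the decision record does not capture.
LIVE_CONFIG = {"review_threshold": 0.8}

def decide_from_live_config(state: dict) -> str:
    if state["risk_score"] >= LIVE_CONFIG["review_threshold"]:
        return "review"
    return "approve"

sound = {"state": {"risk_score": 0.6, "policy": {"review_threshold": 0.8}}}
sound["outcome"] = decide(sound["state"])

fragile = {"state": {"risk_score": 0.6}}
fragile["outcome"] = decide_from_live_config(fragile["state"])

LIVE_CONFIG["review_threshold"] = 0.5  # configuration drifts after the fact

print(replays_identically(sound, decide))                     # True
print(replays_identically(fragile, decide_from_live_config))  # False
```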

Implication

Observability remains necessary. It is not sufficient.

Without deterministic execution boundaries, AI systems accumulate compliance risk regardless of model quality or intent. The earlier determinism is treated as a design constraint rather than a reporting obligation, the lower the long-term audit and governance cost.

This failure mode is not hypothetical. It is already the dominant reason AI initiatives stall at the boundary between pilot and production.

In the practitioner edition, this brief includes:

Alternative interface patterns that preserve genuine choice

Explicit decision thresholds and escalation points

Common implementation mistakes that accelerate silent delegation

These extensions do not prohibit delegation. They make it intentional.

FIELD NOTES

From Practice

When Auditability Is Assumed, Not Designed

This field note synthesizes a recurring failure pattern observed across multiple AI initiatives that reached late pilot or early production before stalling under audit and compliance review.

The systems involved differed in domain, scale, and tooling. The failure mode did not.

In each case, teams believed they had satisfied audit requirements because they had invested heavily in observability: detailed logs, trace IDs, prompt capture, and model outputs persisted for later review. What they lacked was an authoritative execution boundary.

By the time audit questions were formally asked, the systems could no longer answer them deterministically.

The Situation

In the representative case summarized here, the AI system generated recommendations that materially influenced downstream decisions. Human review existed, but only as a post-hoc approval step; by then, the system had already shaped options, prioritization, and timing.

From an engineering perspective, the architecture appeared sound:

Inputs were logged

Prompts were versioned informally

Model outputs were persisted

Service-level traces connected calls across components

From an audit perspective, none of this constituted a decision record.

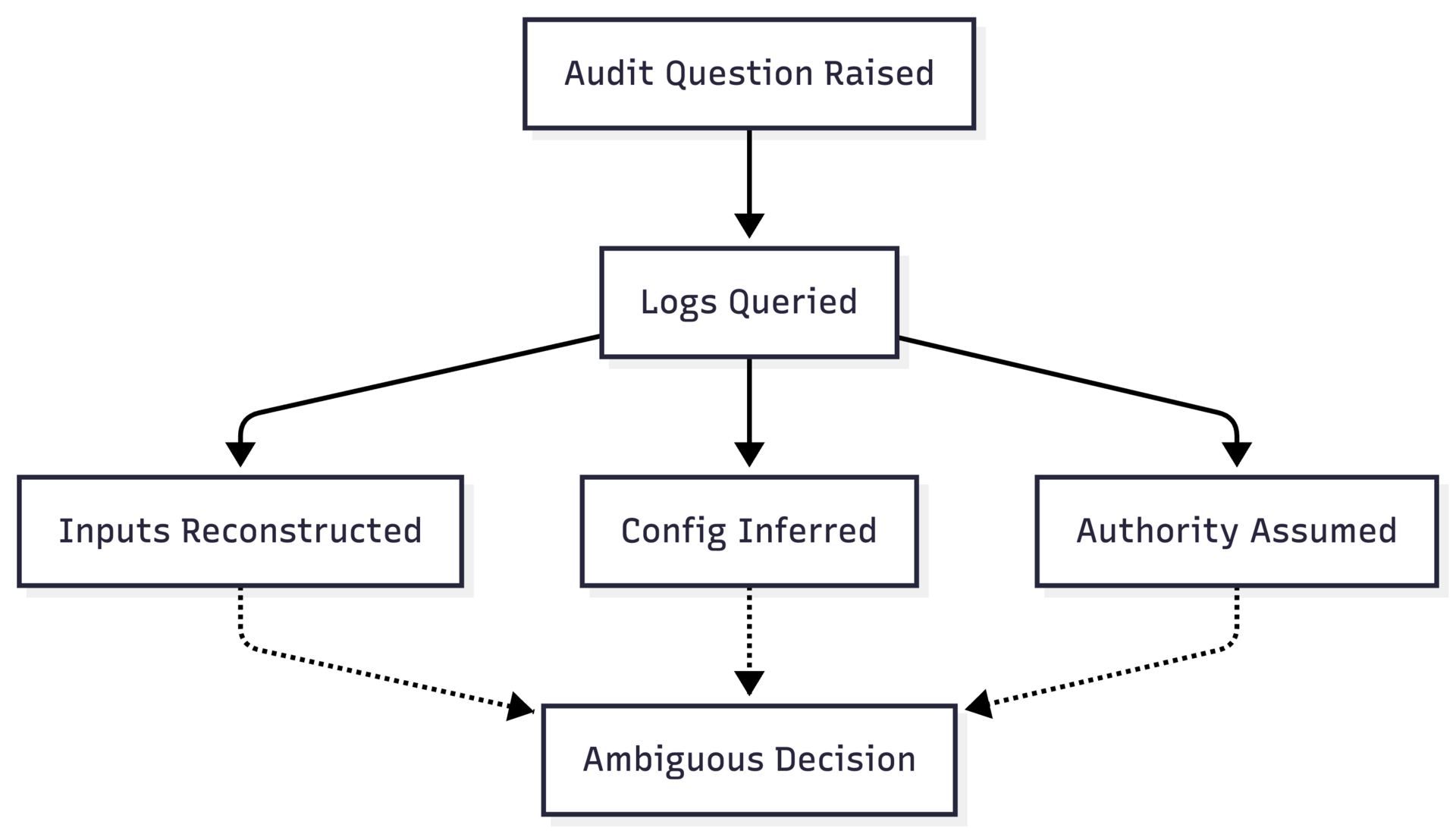

Where Review Failed

During audit review, a simple question was raised:

Why did the system make this recommendation instead of an alternative that was available at the time?

Answering this required reconstructing:

the precise inputs as seen by the model

the model configuration active at that moment

the authority under which the recommendation was allowed

the internal branching logic across services

Despite extensive logs, the reconstruction could not be completed with confidence.

Multiple explanations were plausible. None were provable.

Figure: Audit review attempting post-hoc reconstruction across distributed services.

Structural Cause

The failure was not a lack of transparency. It was a lack of determinism.

The system had no declared point at which:

inputs were validated as complete and authorized

execution was constrained to a single, explicit path

outcomes were committed as authoritative state

Instead, decision logic was distributed across services, configurations, and implicit assumptions. Authority was enforced socially rather than architecturally.
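By contrast, committing outcomes as authoritative state can be sketched as an append-only ledger in which each entry includes the hash of its predecessor, a common tamper-evident construction. This Python sketch is illustrative only. The `DecisionLedger` name and entry layout are assumptions, and hash chaining detects alteration rather than preventing it.

```python
import hashlib
import json

class DecisionLedger:
    """Append-only ledger; each entry commits to the hash of its predecessor."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _hash(prev: str, decision: dict) -> str:
        body = json.dumps({"prev": prev, "decision": decision}, sort_keys=True)
        return hashlib.sha256(body.encode("utf-8")).hexdigest()

    def commit(self, decision: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else self.GENESIS
        stored = dict(decision)  # copy so the caller cannot mutate committed state
        digest = self._hash(prev, stored)
        self.entries.append({"prev": prev, "decision": stored, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every hash; any edit to a past decision breaks the chain."""
        prev = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev or self._hash(prev, entry["decision"]) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True

ledger = DecisionLedger()
ledger.commit({"id": 1, "outcome": "review", "policy_version": "p-7"})
ledger.commit({"id": 2, "outcome": "approve", "policy_version": "p-7"})
print(ledger.verify())  # True

ledger.entries[0]["decision"]["outcome"] = "approve"  # retroactive edit
print(ledger.verify())  # False
```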

Audit review demanded inspection. The system could only offer reconstruction.

Implication

The most dangerous phase in applied AI adoption is not large-scale rollout. It is the quiet middle period, where systems are widely used but still described as pilots.

This is where responsibility erodes without being reassigned.

Mapping to Known Failure Patterns

This field note corresponds directly to the failure pattern illustrated in the Issue 3 Implementation Brief, in the figure captioned "Audit breakdown in an observability-first AI system."

The presence of extensive logs increased confidence during development, but delayed the discovery of architectural fragility until external scrutiny applied pressure.

Consequence

The system was not shut down because it produced incorrect outputs.

It was paused because no one could state, with authority, what it had been allowed to do.

Remediation required architectural changes, not additional logging. The project timeline absorbed this cost; the organization absorbed the risk.

In the practitioner edition, these field notes include:

A deterministic audit-boundary sequence diagram suitable for architecture review

Early warning signals that auditability is being assumed rather than designed

Remediation characteristics observed in successful refactors

These extensions do not change the narrative. They make the structural lessons operational.

VISUALIZATION

Determinism Is a System Boundary, Not a Model Property

This visual essay reframes determinism as an architectural concern rather than a model characteristic. The diagrams below illustrate how determinism emerges, or fails, depending on where boundaries, authority, and state are enforced.

The intent is not to introduce new theory, but to make visible the structural differences between AI systems that survive governance pressure and those that do not.

From Requests to Decisions

Figure: Decision flow without an explicit execution boundary.

In this pattern, the system produces outcomes, but never commits a decision. Context, authority, and policy are inferred across steps rather than enforced at a boundary.

Introducing the Decision Boundary

Figure: Explicit decision boundary with validated inputs and authority.

Here, determinism is achieved not by eliminating probabilistic components, but by constraining when and how they may execute.
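A minimal Python sketch of that constraint follows. It assumes a hypothetical policy with allowed categories and a cost ceiling. A stochastic stand-in for a model proposes an ordering, and a deterministic boundary decides which proposal, if any, may become a decision. Whatever order the model samples, the boundary can only emit a policy-compliant candidate or an escalation.

```python
import random

CANDIDATES = [
    {"id": "a", "category": "standard", "cost": 10},
    {"id": "b", "category": "restricted", "cost": 5},
    {"id": "c", "category": "standard", "cost": 50},
]
POLICY = {"allowed_categories": {"standard"}, "max_cost": 20}

def stochastic_ranker(candidates, rng):
    """Stand-in for a probabilistic model: returns the candidates in a sampled order."""
    ranked = list(candidates)
    rng.shuffle(ranked)
    return ranked

def decision_boundary(ranked, policy):
    """Deterministic gate: the first proposal that satisfies declared policy, else escalate."""
    for candidate in ranked:
        if (candidate["category"] in policy["allowed_categories"]
                and candidate["cost"] <= policy["max_cost"]):
            return candidate["id"]
    return "escalate"

outcomes = {decision_boundary(stochastic_ranker(CANDIDATES, random.Random(seed)), POLICY)
            for seed in range(20)}
print(outcomes)  # {'a'}
```

Here only one candidate satisfies the policy, so every sampled order yields the same decision. When several candidates pass, the result depends on the sampled order, which is why the proposal itself would be recorded as an input to the boundary.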

Authority as an Architectural Concern

Figure: Authority enforced by process vs authority enforced by execution context.

Systems that rely on process assume compliance. Systems that encode authority enforce it.
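In Python, authority encoded in execution context might look like the sketch below. A hypothetical grant names the principal, the decision type, a limit, and an expiry, and the check runs inside execution rather than only at the interface. The clock is injected into the context so that the same check gives the same answer when replayed later. All names and limits are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass(frozen=True)
class AuthorityGrant:
    principal: str
    decision_type: str
    max_exposure: int
    expires_at: datetime

@dataclass(frozen=True)
class ExecutionContext:
    principal: str
    grant: AuthorityGrant
    now: datetime  # injected rather than read from the wall clock, so checks replay

def authorize(ctx: ExecutionContext, decision_type: str, exposure: int) -> None:
    """Raise unless the grant in context covers this specific decision."""
    grant = ctx.grant
    if grant.principal != ctx.principal:
        raise PermissionError("grant belongs to a different principal")
    if grant.decision_type != decision_type:
        raise PermissionError(f"grant does not cover {decision_type!r}")
    if exposure > grant.max_exposure:
        raise PermissionError("exposure exceeds granted limit")
    if ctx.now >= grant.expires_at:
        raise PermissionError("grant has expired")

grant = AuthorityGrant("recsys-prod", "recommendation", 10_000,
                       datetime(2030, 1, 1, tzinfo=timezone.utc))
ctx = ExecutionContext("recsys-prod", grant, datetime(2025, 6, 1, tzinfo=timezone.utc))

authorize(ctx, "recommendation", 5_000)  # within scope: no exception
try:
    authorize(ctx, "recommendation", 25_000)
except PermissionError as err:
    print(f"refused: {err}")  # refused: exposure exceeds granted limit
```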

Determinism Over Time

Figure: Replayability across time using authoritative state.

Determinism is ultimately about time. If a system cannot answer the same question the same way later, given the same state, it is not deterministic in any meaningful sense.

In the practitioner edition, this visualization includes:

A failure-mode overlay mapping each diagram to common audit findings

A state-machine view of the decision boundary suitable for design reviews

Guidance on where to place boundaries in layered architectures

These extensions do not add diagrams for illustration. They turn diagrams into design instruments.

RESEARCH & SIGNALS

Purpose

This section highlights recent research, regulatory signals, and industry developments that materially affect how AI systems must be designed, governed, and audited in production environments.

The focus is not novelty, but operational relevance.

Signal 1: Explainability Is Being Reframed as Reproducibility

Across regulatory guidance, audit frameworks, and internal risk reviews, there is a visible shift away from demands for narrative explanations toward demands for decision reproducibility.

Rather than asking systems to justify outputs in human terms, reviewers increasingly ask whether a decision can be replayed, inspected, and constrained under the same conditions at a later time.

This reframing aligns with a deterministic view of auditability: explanations are secondary to authoritative state, validated inputs, and constrained execution paths.

Why it matters: Teams investing heavily in post-hoc explanation layers may be optimizing for a requirement that is quietly being replaced.

Signal 2: Stochastic Systems Are Colliding with Deterministic Obligations

Recent analysis of AI incidents and compliance reviews shows a consistent pattern: systems built around probabilistic components are being evaluated using deterministic standards inherited from traditional software and regulated decision systems.

The tension is not ideological. It is structural.

Organizations are discovering that stochastic behavior is acceptable within a bounded execution context, but unacceptable at the system boundary where authority, accountability, and compliance are enforced.

Why it matters: Treating probabilistic models as end-to-end decision-makers increases governance cost and slows deployment. Treating them as constrained components reduces both.

Signal 3: Pilot Systems Are Persisting Indefinitely

Field reports show pilot deployments remaining active for months or years without formal transition into production.

These systems accumulate reliance without accumulating controls. They sit outside formal accountability structures while influencing real outcomes.

This persistence is a governance blind spot, not a technical one.

Signal 4: Audit Cost Is Emerging as a First-Class Economic Constraint

In multiple sectors, the limiting factor for AI deployment is no longer model performance or infrastructure cost, but the ongoing expense of audit, review, and compliance assurance.

Systems that rely on reconstruction, manual review, or narrative explanation accumulate recurring audit costs that scale with usage. Systems designed around deterministic boundaries amortize those costs upfront.

This economic signal is beginning to influence architecture choices earlier in the lifecycle.

Why it matters: Auditability is no longer a compliance checkbox. It is a cost driver that directly affects ROI.

In the practitioner edition, these research notes include:

A mapping between emerging regulatory language and concrete architectural requirements

Indicators that an organization is accruing hidden audit debt

Questions to assess whether explainability efforts are misaligned with audit expectations

These extensions do not add new signals. They translate signals into design and governance implications.

SYNTHESIS

Determinism as the Hidden Constraint

Issue 3 examined a recurring implementation failure that does not announce itself as a technical limitation, a governance gap, or a compliance error, but ultimately manifests as all three.

Across the lead essay, implementation brief, field note, and visual essay, a consistent pattern emerged: AI systems fail under real-world scrutiny not because they are opaque, but because they are indeterminate at the points where authority is exercised.

From Capability to Constraint

Much contemporary AI discourse frames determinism as a limitation, something to be traded off against flexibility, adaptiveness, or performance.

The material in this issue suggests the opposite framing is more useful in practice.

Determinism is not a property to be maximized everywhere. It is a constraint to be placed deliberately at system boundaries where decisions acquire consequences.

Where those boundaries are absent, organizations rely on inference, process, and explanation to compensate. Where they are present, stochastic components remain usable without compromising accountability.

Why Observability Was Not Enough

Several pieces in this issue showed how teams equate extensive logging with control.

Observability improves visibility after the fact. It does not determine what was permissible at the time of execution.

When audit, compliance, or accountability pressures arise, systems designed around reconstruction collapse into ambiguity. Systems designed around authoritative state invite inspection instead.

This distinction explains why adding more logs rarely resolves audit concerns, and often makes them worse.

Determinism as an Organizational Interface

A less obvious insight surfaced across the field note and visual essay: determinism mediates between organizational functions, not just between machines.

Decision boundaries clarify where responsibility resides, who may act, and under what conditions. They reduce the need for interpretive coordination between engineering, legal, and operations teams.

In this sense, determinism functions as an interface between technical execution and institutional accountability.

What Carries Forward

Issue 3 does not argue for deterministic models or static systems.

It argues for deterministic commitments:

explicit validation of inputs and authority

constrained execution paths

authoritative state transitions

replayability over time

These commitments allow probabilistic components to exist without placing the organization at risk.

Closing

Most AI failures are discovered late, when systems meet governance, audit, or operational reality.

The work in this issue suggests those failures are predictable. They emerge wherever determinism is assumed rather than designed.

Treating determinism as a first-class system boundary is not conservative. It is what allows AI systems to operate at scale, under scrutiny, and over time.

The purpose of The Journal of Applied AI is not to track novelty or celebrate technical feats in isolation.

It exists to surface the structural conditions under which AI becomes durable infrastructure rather than temporary advantage.

That requires uncomfortable clarity: about boundaries, costs, controls, and responsibility.

In the next issue, we will examine control surfaces: where intervention, override, and escalation must exist in AI systems that operate continuously in production. The focus will shift from decision correctness to operational control under uncertainty, and what happens when systems cannot be stopped, steered, or safely degraded.

Thank you for reading. This journal is published by Hypermodern AI.